Analyzing Classful IPv4 Networks

INTERNOLD NETWORKS CCNA LIVE WEBCLASS (INCLW)

When operating a network, you often start investigating a problem based on an IP address and mask. Based on the IP address alone, you should be able to determine several facts about the Class A, B, or C network in which the IP address resides. These facts can be useful when troubleshooting some networking problems.

This lesson lists the key facts about classful IP networks and explains how to discover these facts. Following that, this lesson lists some practice problems. Before moving to the next lesson, you should practice until you can consistently determine all these facts, quickly and confidently, based on an IP address.

Classful Network Concepts

Imagine that you have a job interview for your first IT job. As part of the interview, you’re given an IPv4 address and mask: 10.4.5.99, 255.255.255.0. What can you tell the interviewer about the classful network (in this case, the Class A network) in which the IP address resides?

This section, the first of two major sections in this lesson, reviews the concepts of classful IP networks (in other words, Class A, B, and C networks). In particular, this lesson examines how to begin with a single IP address and then determine the following facts:

- Class (A, B, or C)

- Default mask

- Number of network octets/bits

- Number of host octets/bits

- Number of host addresses in the network

- Network ID

- Network broadcast address

- First and last usable address in the network

IPv4 Network Classes and Related Facts

IP version 4 (IPv4) defines five address classes. Three of the classes, Classes A, B, and C, consist of unicast IP addresses. Unicast addresses identify a single host or interface so that the address uniquely identifies the device. Class D addresses serve as multicast addresses, so that one packet sent to a Class D multicast IPv4 address can actually be delivered to multiple hosts. Finally, Class E addresses were originally intended for experimentation, but were changed to simply be reserved for future use. The class can be identified based on the value of the first octet of the address, as shown in Table 1.

Table 1: IPv4 Address Classes Based on First Octet Values
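Because the class depends only on the first octet, the lookup can be expressed in a few lines of code. The following Python sketch is one possible way to do it. The function name address_class is hypothetical, and the Class D (224-239) and Class E (240-255) ranges are the standard values, assumed here to match Table 1.

```python
def address_class(ip: str) -> str:
    """Return the IPv4 class (A-E) of a dotted-decimal address.

    First octets 0 and 127 are reported as "reserved", because the
    lesson treats networks 0.0.0.0 and 127.0.0.0 as reserved.
    """
    first_octet = int(ip.split(".")[0])
    if not 0 <= first_octet <= 255:
        raise ValueError(f"invalid first octet: {first_octet}")
    if first_octet in (0, 127):
        return "reserved"
    if first_octet <= 126:
        return "A"
    if first_octet <= 191:
        return "B"
    if first_octet <= 223:
        return "C"
    if first_octet <= 239:  # assumed standard Class D range
        return "D"
    return "E"              # assumed standard Class E range


print(address_class("10.4.5.99"))   # A
print(address_class("172.16.8.9"))  # B
```

Only the first octet is examined, so the mask supplied with an address plays no part in determining the class.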

After you identify the class as either A, B, or C, many other related facts can be derived just through memorization. Table 2 lists that information for reference and later study; each of these concepts is described in this lesson.

Table 2: Key Facts for Classes A, B, and C

At times, some people today look back and wonder, “Are there 128 class A networks, with two reserved networks, or are there truly only 126 class A networks?” Frankly, the difference is unimportant, and the wording is just two ways to state the same idea. The important fact to know is that Class A network 0.0.0.0 and network 127.0.0.0 are reserved. In fact, they have been reserved since the creation of Class A networks, as listed in RFC 791 (published in 1981).

Although it may be a bit of a tangent, what is more interesting today is that over time, other newer RFCs have also reserved small pieces of the Class A, B, and C address space. So, tables like Table 2, which count the Class A, B, and C networks, give a good sense of how many networks each class contains; however, the number of reserved networks does change slightly over time (albeit slowly) as other address ranges are reserved.

NOTE: If you are interested in seeing all the reserved IPv4 address ranges, just do an Internet search on “IANA IPv4 special-purpose address registry.”

The Number and Size of the Class A, B, and C Networks

Table 2 lists the range of Class A, B, and C network numbers; however, some key points can be lost just referencing a table of information. This section examines the Class A, B, and C network numbers, focusing on the more important points and the exceptions and unusual cases.

First, the number of networks from each class significantly differs. Only 126 Class A networks exist: network 1.0.0.0, 2.0.0.0, 3.0.0.0, and so on, up through network 126.0.0.0. However, 16,384 Class B networks exist, with more than 2 million Class C networks.

Next, note that the size of networks from each class also significantly differs. Each Class A network is relatively large—over 16 million host IP addresses per network—so they were originally intended to be used by the largest companies and organizations. Class B networks are smaller, with over 65,000 hosts per network. Finally, Class C networks, intended for small organizations, have 254 hosts in each network. Figure 1 summarizes those facts.

Figure 1: Numbers and Sizes of Class A, B, and C Networks
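The network counts quoted above follow from how many network bits are free to vary in each class. The Python sketch below reproduces them under one stated assumption that this lesson does not spell out: the leading bits of the first octet are fixed per class (binary 0 for Class A, 10 for Class B, 110 for Class C). The names used are hypothetical.

```python
# Total network bits per class, and how many leading bits the class fixes.
NETWORK_BITS = {"A": 8, "B": 16, "C": 24}
FIXED_CLASS_BITS = {"A": 1, "B": 2, "C": 3}  # assumption: 0, 10, 110 prefixes


def network_count(cls: str) -> int:
    """Return the number of usable classful networks in the given class."""
    variable_bits = NETWORK_BITS[cls] - FIXED_CLASS_BITS[cls]
    count = 2 ** variable_bits
    # Class A loses networks 0.0.0.0 and 127.0.0.0, which are reserved.
    return count - 2 if cls == "A" else count


for cls in "ABC":
    print(f"Class {cls}: {network_count(cls):,} networks")
```

Run as written, this prints 126 for Class A, 16,384 for Class B, and 2,097,152 for Class C, matching the counts in the text.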

Address Formats

In some cases, an engineer might need to think about a Class A, B, or C network as if the network has not been subdivided through the subnetting process. In such a case, the addresses in the classful network have a structure with two parts: the network part (sometimes called the prefix) and the host part. Then, comparing any two IP addresses in one network, the following observations can be made:

- The addresses in the same network have the same values in the network part.

- The addresses in the same network have different values in the host part.

For example, in Class A network 10.0.0.0, by definition, the network part consists of the first octet. As a result, all addresses have an equal value in the network part, namely a 10 in the first octet. If you then compare any two addresses in the network, the addresses have a different value in the last three octets (the host octets). For example, IP addresses 10.1.1.1 and 10.1.1.2 have the same value (10) in the network part, but different values in the host part.

Figure 2 shows the format and sizes (in number of bits) of the network and host parts of IP addresses in Class A, B, and C networks, before any subnetting has been applied.

Figure 2: Sizes (Bits) of the Network and Host Parts of Unsubnetted Classful Networks

Default Masks

Although we humans can easily understand the concepts behind Figure 2, computers prefer numbers. To communicate those same ideas to computers, each network class has an associated default mask that defines the size of the network and host parts of an unsubnetted Class A, B, and C network. To do so, the mask lists binary 1s for the bits considered to be in the network part and binary 0s for the bits considered to be in the host part.

For example, Class A network 10.0.0.0 has a network part of the first single octet (8 bits) and a host part of the last three octets (24 bits). As a result, the Class A default mask is 255.0.0.0, which in binary is

11111111 00000000 00000000 00000000

Figure 3 shows default masks for each network class, both in binary and dotted-decimal format.

Figure 3: Default Masks for Classes A, B, and C

NOTE: Decimal 255 converts to the binary value 11111111. Decimal 0, converted to 8-bit binary, is 00000000. See “Numeric Reference Tables” for a conversion table.
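As an illustration of the idea that a default mask is simply binary 1s for the network bits followed by binary 0s for the host bits, the following Python sketch builds each mask from the number of network bits. The function name default_mask is hypothetical.

```python
from typing import Tuple

NETWORK_BITS = {"A": 8, "B": 16, "C": 24}


def default_mask(cls: str) -> Tuple[str, str]:
    """Return the class default mask as (dotted_decimal, binary)."""
    n = NETWORK_BITS[cls]
    # n binary 1s on the left, then (32 - n) binary 0s.
    mask = (0xFFFFFFFF << (32 - n)) & 0xFFFFFFFF
    octets = [(mask >> shift) & 0xFF for shift in (24, 16, 8, 0)]
    dotted = ".".join(str(octet) for octet in octets)
    binary = " ".join(f"{octet:08b}" for octet in octets)
    return dotted, binary


for cls in "ABC":
    dotted, binary = default_mask(cls)
    print(f"Class {cls}: {dotted:<15} {binary}")
```

For Class A the result is 255.0.0.0 and 11111111 00000000 00000000 00000000, the same values shown in the example above.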

Number of Hosts per Network

Calculating the number of hosts per network requires some basic binary math. First, consider a case where you have a single binary digit. How many unique values are there? There are, of course, two values: 0 and 1. With 2 bits, you can make four combinations: 00, 01, 10, and 11. As it turns out, the total number of unique values you can make with N bits is 2^N.

Host addresses (the IP addresses assigned to hosts) must be unique. The host bits exist for the purpose of giving each host a unique IP address by virtue of having a different value in the host part of the addresses. So, with H host bits, 2^H unique combinations exist.

However, the number of hosts in a network is not 2^H; instead, it is 2^H - 2. Each network reserves two numbers that would have otherwise been useful as host addresses, but have instead been reserved for special use: one for the network ID and one for the network broadcast address. As a result, the formula to calculate the number of host addresses per Class A, B, or C network is

2^H - 2

where H is the number of host bits.
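As a quick check of the formula, this short Python sketch applies it to the host-bit counts of the three classes (24, 16, and 8 bits). The function name usable_hosts is hypothetical.

```python
def usable_hosts(host_bits: int) -> int:
    """Host addresses available with H host bits: 2^H - 2."""
    if host_bits < 2:
        raise ValueError("need at least 2 host bits for any usable address")
    # Subtract the network ID and the network broadcast address.
    return 2 ** host_bits - 2


for cls, host_bits in (("A", 24), ("B", 16), ("C", 8)):
    print(f"Class {cls}: {usable_hosts(host_bits):,} hosts per network")
```

The results are 16,777,214 for Class A, 65,534 for Class B, and 254 for Class C.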

Deriving the Network ID and Related Numbers

Each classful network has four key numbers that describe the network. You can derive these four numbers if you start with just one IP address in the network. The numbers are as follows:

- Network number

- First (numerically lowest) usable address

- Last (numerically highest) usable address

- Network broadcast address

First, consider both the network number and first usable IP address. The network number, also called the network ID or network address, identifies the network. By definition, the network number is the numerically lowest number in the network. However, to prevent any ambiguity, the people who defined IP addressing added the restriction that the network number cannot be assigned as an IP address. So, the lowest number in the network is reserved as the network ID, and the first (numerically lowest) host IP address is one larger than the network number.

Next, consider the network broadcast address along with the last (numerically highest) usable IP address. The TCP/IP RFCs define a network broadcast address as a special address in each network. This broadcast address could be used as the destination address in a packet, and the routers would forward a copy of that one packet to all hosts in that classful network. Numerically, a network broadcast address is always the highest (last) number in the network. As a result, the highest (last) number usable as an IP address is the address that is simply one less than the network broadcast address.

Simply put, if you can find the network number and network broadcast address, finding the first and last usable IP addresses in the network is easy. For the exam, you should be able to find all four values with ease; the process is as follows:

Step 1. Determine the class (A, B, or C) based on the first octet.

Step 2. Mentally divide the network and host octets based on the class.

Step 3. To find the network number, change the IP address’s host octets to 0.

Step 4. To find the first address, add 1 to the fourth octet of the network ID.

Step 5. To find the broadcast address, change the network ID’s host octets to 255.

Step 6. To find the last address, subtract 1 from the fourth octet of the network broadcast address.

The written process actually looks harder than it is. Figure 4 shows an example of the process, using Class A IP address 10.17.18.21, with the circled numbers matching the process.

Figure 4: Example of Deriving the Network ID and Other Values from 10.17.18.21

Figure 4 shows the identification of the class as Class A (Step 1) and the number of network/host octets as 1 and 3, respectively. So, to find the network ID at Step 3, the figure copies only the first octet, setting the last three (host) octets to 0. At Step 4, just copy the network ID and add 1 to the fourth octet. Similarly, to find the broadcast address at Step 5, copy the network octets, but set the host octets to 255. Then, at Step 6, subtract 1 from the fourth octet to find the last (numerically highest) usable IP address.

Just to show an alternative example, consider IP address 172.16.8.9. Figure 5 shows the process applied to this IP address.

Figure 5: Example Deriving the Network ID and Other Values from 172.16.8.9

Figure 5 shows the identification of the class as Class B (Step 1) and the number of network/host octets as 2 and 2, respectively. So, to find the network ID at Step 3, the figure copies only the first two octets, setting the last two (host) octets to 0. Similarly, Step 5 shows the same action, but with the last two (host) octets being set to 255.
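The six-step process can also be sketched in code, which makes it easy to check your own answers to practice problems. The Python below is a minimal sketch that follows the steps literally. The function name classful_facts and the dictionary keys are hypothetical.

```python
HOST_OCTETS = {"A": 3, "B": 2, "C": 1}


def classful_facts(ip: str) -> dict:
    """Derive the classful network's key numbers from one IP address."""
    octets = [int(part) for part in ip.split(".")]
    if len(octets) != 4 or any(not 0 <= o <= 255 for o in octets):
        raise ValueError(f"not a valid IPv4 address: {ip}")

    # Step 1: determine the class from the first octet.
    first = octets[0]
    if 1 <= first <= 126:
        cls = "A"
    elif 128 <= first <= 191:
        cls = "B"
    elif 192 <= first <= 223:
        cls = "C"
    else:
        raise ValueError(f"{ip} is not in a Class A, B, or C network")

    # Step 2: divide the octets into network and host parts.
    host_count = HOST_OCTETS[cls]
    net_part = octets[: 4 - host_count]

    # Step 3: network ID = network octets, host octets set to 0.
    network = net_part + [0] * host_count
    # Step 4: first address = network ID with 1 added to the fourth octet.
    first_addr = network[:3] + [network[3] + 1]
    # Step 5: broadcast = network octets, host octets set to 255.
    broadcast = net_part + [255] * host_count
    # Step 6: last address = broadcast with 1 subtracted from the fourth octet.
    last_addr = broadcast[:3] + [broadcast[3] - 1]

    def dotted(values):
        return ".".join(str(v) for v in values)

    return {
        "class": cls,
        "network_id": dotted(network),
        "first_address": dotted(first_addr),
        "last_address": dotted(last_addr),
        "broadcast": dotted(broadcast),
    }


print(classful_facts("10.17.18.21"))
print(classful_facts("172.16.8.9"))
```

For 10.17.18.21 the sketch returns network ID 10.0.0.0, first address 10.0.0.1, last address 10.255.255.254, and broadcast 10.255.255.255. For 172.16.8.9 it returns 172.16.0.0, 172.16.0.1, 172.16.255.254, and 172.16.255.255.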

Unusual Network IDs and Network Broadcast Addresses

Some of the more unusual numbers in and around the range of Class A, B, and C network numbers can cause some confusion. This section lists some examples of numbers that lead many people to make wrong assumptions about the meaning of the number.

For Class A, the first odd fact is that the range of values in the first octet omits the numbers 0 and 127. As it turns out, what would be Class A network 0.0.0.0 was originally reserved for some broadcasting requirements, so all addresses that begin with 0 in the first octet are reserved. What would be Class A network 127.0.0.0 is still reserved because of a special address used in software testing, called the loopback address (127.0.0.1).

For Class B (and C), some of the network numbers can look odd, particularly if you fall into a habit of thinking that 0s at the end mean the number is a network ID, and 255s at the end mean it’s a network broadcast address. First, Class B network numbers range from 128.0.0.0 to 191.255.0.0, for a total of 2^14 (16,384) networks. However, even the very first (lowest number) Class B network number (128.0.0.0) looks a little like a Class A network number, because it ends with three 0s. However, the first octet is 128, making it a Class B network with a two-octet network part (128.0).

For another Class B example, the high end of the Class B range also might look strange at first glance (191.255.0.0), but this is indeed the numerically highest of the valid Class B network numbers. This network’s broadcast address, 191.255.255.255, might look a little like a Class A broadcast address because of the three 255s at the end, but it is indeed the broadcast address of a Class B network.

Similarly to Class B networks, some of the valid Class C network numbers do look strange. For example, Class C network 192.0.0.0 looks a little like a Class A network because of the last three octets being 0, but because it is a Class C network, it consists of all addresses that begin with three octets equal to 192.0.0. Similarly, Class C network 223.255.255.0, another valid Class C network, consists of all addresses that begin with 223.255.255.
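If you want to double-check these edge cases, one option is Python's standard ipaddress module, treating each classful network as a prefix of the class's default length (/16 for Class B, /24 for Class C). This is only a verification sketch, and the helper name broadcast_of is hypothetical.

```python
import ipaddress


def broadcast_of(network_id: str, prefix_len: int) -> str:
    """Return the broadcast address of the network as a string."""
    network = ipaddress.ip_network(f"{network_id}/{prefix_len}")
    return str(network.broadcast_address)


# Odd-looking but valid classful networks from this section.
examples = [
    ("128.0.0.0", 16),      # lowest Class B network
    ("191.255.0.0", 16),    # highest Class B network
    ("192.0.0.0", 24),      # lowest Class C network
    ("223.255.255.0", 24),  # highest Class C network
]
for network_id, prefix_len in examples:
    print(f"{network_id}/{prefix_len} -> broadcast "
          f"{broadcast_of(network_id, prefix_len)}")
```

Note that 191.255.0.0/16 yields broadcast 191.255.255.255, the address that looks like a Class A broadcast at first glance.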

Practice with Classful Networks

As with all areas of IP addressing and subnetting, you need to practice to be ready for the CCENT and CCNA Routing and Switching exams. You should practice some while reading this lesson to make sure that you understand the processes. At that point, you can use your notes and this course as a reference, with a goal of understanding the process. After that, keep practicing this and all the other subnetting processes. Before you take the exam, you should be able to always get the right answer, and with speed. Table 3 summarizes the key concepts and suggestions for this two-phase approach.

Table 3: Keep-Reading and Take-Exam Goals for This Lesson’s Topics

Practice Deriving Key Facts Based on an IP Address

Practice finding the various facts that can be derived from an IP address, as discussed throughout this lesson. To do so, complete Table 4.

Table 4: Practice Problems: Find the Network ID and Network Broadcast

The answers are listed in the section “Answers to Earlier Practice Problems,” later in this lesson.

Practice Remembering the Details of Address Classes

Tables 1 and 2, shown earlier in this lesson, summarized some key information about IPv4 address classes. Tables 5 and 6 show sparse versions of these same tables. To practice recalling those key facts, particularly the range of values in the first octet that identifies the address class, complete these tables. Then, refer to Tables 1 and 2 to check your answers. Repeat this process until you can recall all the information in the tables.

Table 5: Sparse Study Table Version of Table 1

Table 6: Sparse Study Table Version of Table 2

INCLW01 Week 2 Practice Quiz

Read the question carefully and choose the best answer(s).

Note: This is a mini-quiz of 10 questions per attempt. Always click 'Try Again' to get a new set of questions.

Miscellaneous LAN Topics

Between this book and the ICND1 100-105 Cert Guide, 14 chapters have been devoted to topics specific to LANs. This chapter is the last of those chapters. This chapter completes the LAN-specific discussion with a few small topics that just do not fit neatly in the other chapters.

The chapter begins with three security topics. The first section addresses IEEE 802.1x, which defines a mechanism to secure user access to a LAN by requiring the user to supply a username and password before a switch allows the device’s sent frames into the LAN. This tool helps secure the network against attackers gaining access to the network. The second section, “AAA Authentication,” discusses network device security, protecting router and switch CLI access by requiring username/password login with an external authentication server. The third section, “DHCP Snooping,” explores how switches can prevent security attacks that take advantage of DHCP messages and functions. By watching DHCP messages and noticing when they are used in ways that normal DHCP operation would not produce, DHCP snooping can prevent attacks by simply filtering certain DHCP messages.

The last of the four major sections in this chapter looks at two similar design tools that make multiple switches act like one switch: switch stacking and chassis aggregation. Switch stacking allows a set of similar switches that sit near each other (in the same wiring closet, typically in the same part of the same rack) to be cabled together and then act like a single switch. Using a switch stack greatly reduces the amount of work required to manage the switches, and it reduces the overhead of control and management protocols used in the network. Switch chassis aggregation has many of the same benefits, but is supported more often as a distribution or core switch feature, with switch stacking as a more typical access layer switch feature.

All four sections of this chapter have a matching exam topic that uses the verb “describe,” so this chapter touches on the basic descriptions of a tool, rather than deep configuration. A few of the topics will show some configuration as a means to describe the topic, but the chapter is meant to help you come away with an understanding of the fundamentals, rather than an ability to configure the features.

Securing Access with IEEE 802.1x

In some enterprise LANs, the LAN is built with cables run to each desk, in every cubicle and every office. When you move to a new space, all you have to do is connect a short patch cable from your PC to the RJ-45 socket on the wall and you are connected to the network. Once booted, your PC can send packets anywhere in the network. Security? That happens mostly at the devices you try to access, for instance, when you log in to a server.

The previous paragraph describes how many networks work. That attitude views the network as an open highway between the endpoints in the network: the network is there to create connectivity, with high availability, and to make it easy to connect your device. Those are worthy goals. However, making the LAN accessible to anyone, so that anyone can attempt to connect to servers in the network, allows attackers to connect and then try to break through the security that protects the servers. That approach may be too insecure. For instance, any attacker who could gain physical access could plug in a laptop and start running tools to try to exploit all those servers attached to the internal network.

Today, many companies secure access to the network. Sure, they begin by creating basic connectivity: cabling the LAN and connecting cables to all the desks at all the cubicles and offices. All those ports physically work. But a user cannot just plug in her PC and start working; she must go through a security process before the LAN switch will allow the user to send any other messages in the network.

Switches can use IEEE standard 802.1x to secure access to LAN ports. To set up the feature, the LAN switches must be configured to enable 802.1x. Additionally, the IT staff must implement an authentication, authorization, and accounting (AAA) server. The AAA server (commonly pronounced “triple A” server) will keep the usernames and passwords, and when the user supplies that information, it is the AAA server that determines if what the user typed was correct or not.

Once implemented, the LAN switch acts as an 802.1x authenticator, as shown in Figure 6-1. As an 802.1x authenticator, a switch can be configured to enable some ports for 802.1x, most likely the access ports connected to end users. Enabling a port for 802.1x defines that when the port first comes up, the switch filters all incoming traffic (other than 802.1x traffic). 802.1x must first authenticate the user that uses that device.

Figure 6-1: Switch as 802.1x Authenticator, with AAA Server, and PC Not Yet Connected

Note that the switch usually configures access ports that connect to end users with 802.1x, but does not enable 802.1x on ports connected to IT-controlled devices, such as trunk ports, or ports connected in parts of the network that are physically more secure.

The 802.1x authentication process works like the flow in Figure 6-2. Once the PC connects and the port comes up, the switch uses 802.1x messages to ask the PC to supply a username/password. The PC user must then supply that information. For that process to work, the end-user device must be using an 802.1x client called a supplicant; many OSs include an 802.1x supplicant, so it may just be seen as a part of the OS settings.

Figure 6-2: Generic 802.1x Authentication Flows

At Steps 3 and 4 in Figure 6-2, the switch authenticates the user, to find out if the username and password combination is legitimate. The switch, acting as 802.1x authenticator, asks the AAA server if the supplied username and password combo is correct, with the AAA server answering back. If the username and password were correct, then the switch authorizes the port. Once authorized, the switch no longer filters incoming messages on that port. If the username/password check shows that the username/password was incorrect, or the process fails for any reason, the port remains in an unauthorized state. The user can continue to retry the attempt.
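The port behavior described above can be modeled as a very small state machine. The Python sketch below is a toy model only: real 802.1x exchanges use EAP methods rather than a plain password comparison, and the class and function names here (Dot1xPort, toy_aaa_server) are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Dot1xPort:
    """Toy model of a switch port enabled for 802.1x."""
    authorized: bool = False  # the port starts out unauthorized

    def accepts(self, frame_type: str) -> bool:
        # Until authorized, the switch filters everything except 802.1x.
        return self.authorized or frame_type == "802.1x"

    def authenticate(self, username: str, password: str,
                     aaa_check: Callable[[str, str], bool]) -> bool:
        # The switch (authenticator) asks the AAA server for the verdict.
        if aaa_check(username, password):
            self.authorized = True
        # On failure the port simply stays unauthorized; the user may retry.
        return self.authorized


def toy_aaa_server(credentials: Dict[str, str]) -> Callable[[str, str], bool]:
    """Return a stand-in for the AAA server's username/password check."""
    def check(username: str, password: str) -> bool:
        return credentials.get(username) == password
    return check


port = Dot1xPort()
aaa = toy_aaa_server({"alice": "correct-horse"})
print(port.accepts("ipv4"))                           # False
print(port.authenticate("alice", "wrong", aaa))       # False
print(port.authenticate("alice", "correct-horse", aaa))  # True
print(port.accepts("ipv4"))                           # True
```

The switch itself holds no credentials in this model. It only relays the question to the AAA check and changes the port state based on the answer.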

Figure 6-3 rounds out this topic by showing an example of one key protocol used by 802.1x: Extensible Authentication Protocol (EAP). The switch (the authenticator) uses RADIUS between itself and the AAA server, which itself uses IP and UDP. However, 802.1x, an Ethernet protocol, does not use IP or UDP. But 802.1x wants to exchange some authentication information all the way to the RADIUS AAA server. The solution is to use EAP, as shown in Figure 6-3.

Figure 6-3: EAP and RADIUS Protocol Flows with 802.1x

As shown in the figure, the EAP message flows from the supplicant to the authentication server, just in different types of messages. The flow from the supplicant (the end-user device) to the switch transports the EAP message directly in an Ethernet frame with an encapsulation called EAP over LAN (EAPoL). The flow from the authenticator (switch) to the authentication server flows in an IP packet. In fact, it looks much like a normal message used by the RADIUS protocol (RFC 2865). The RADIUS protocol works as a UDP application, with an IP and UDP header, as shown in the figure.
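To make the two encapsulations concrete, the following Python sketch models the same EAP message being carried first in an EAPoL frame and then in a RADIUS packet. It is a data-structure illustration only, not a protocol implementation. The class names, addresses, and payload are hypothetical, and UDP port 1812 is used on the assumption that the server listens on the standard RADIUS authentication port.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EapMessage:
    payload: bytes


@dataclass(frozen=True)
class EapolFrame:
    """Supplicant <-> authenticator: EAP directly in an Ethernet frame."""
    src_mac: str
    dst_mac: str
    eap: EapMessage


@dataclass(frozen=True)
class RadiusPacket:
    """Authenticator <-> authentication server: EAP inside RADIUS/UDP/IP."""
    src_ip: str
    dst_ip: str
    udp_dst_port: int
    eap: EapMessage


def relay_to_server(frame: EapolFrame, switch_ip: str, server_ip: str,
                    udp_port: int = 1812) -> RadiusPacket:
    # The switch strips the Ethernet (EAPoL) encapsulation and re-wraps
    # the unchanged EAP message for the trip to the AAA server.
    return RadiusPacket(switch_ip, server_ip, udp_port, frame.eap)


# Hypothetical addresses, for illustration only.
frame = EapolFrame("00:11:22:33:44:55", "66:77:88:99:aa:bb",
                   EapMessage(b"identity-response"))
packet = relay_to_server(frame, "10.1.1.2", "10.9.9.9")
print(packet.eap == frame.eap)  # True
```

The point of the model is that the EAP message itself is the same object on both legs. Only the wrapper around it changes.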

Now that you have heard some of the details and terminology, this list summarizes the entire process:

- A AAA server must be configured with usernames and passwords.

- Each LAN switch must be configured for 802.1x: enable the switch as an authenticator, configure the IP address of the AAA server, and enable 802.1x on the required ports.

- Users must know a username/password combination that exists on the AAA server, or they will not be able to access the network from any device.

AAA Authentication

The ICND1 100-105 Cert Guide discusses many details about device management, in particular how to secure network devices. However, all those device security methods shown in the ICND1 half of the CCNA R&S exam topics use locally configured information to secure the login to the networking device.

Using usernames and passwords configured locally on each switch causes some administrative headaches. For instance, every switch and router needs the configuration for all users who might need to log in to the devices. Good security practices tell us to change our passwords regularly, but logging in to hundreds of devices to change passwords is a large task, so often, the passwords remain unchanged for long periods.

A better option would be to use an external AAA server. The AAA server centralizes and secures all the username/password pairs. The switches and routers still need some local security configuration to refer to the AAA server, but the username/password exist centrally, greatly reducing the administrative effort, and increasing the chance that passwords are changed regularly and are more secure. It is also easier to track which users logged in to which devices and when, and to revoke access as people leave their current job.

This short section discusses the basics of how networking devices can use a AAA server.

AAA Login Process

First, to use AAA, the site would need to install and configure a AAA server, such as the Cisco Access Control Server (ACS). Cisco ACS is AAA software that you can install on your own server (physical or virtual).

The networking devices would each then need new configuration to tell the device to start using the AAA server. That configuration would point to the IP address of the AAA server, and define which AAA protocol to use: either TACACS+ or RADIUS. The configuration includes details about TCP (TACACS+) or UDP (RADIUS) ports to use.

When using a AAA server for authentication, the switch (or router) simply sends a message to the AAA server asking whether the username and password are allowed, and the AAA server replies. Figure 6-4 shows an example, with the user first supplying his username/password, the switch asking the AAA server, and the server replying to the switch stating that the username/password is valid.

Figure 6-4: Basic Authentication Process with an External AAA Server

TACACS+ and RADIUS Protocols

While Figure 6-4 shows the general idea, note that the information flows with a couple of different protocols. On the left, the connection between the user and the switch or router uses Telnet or SSH. On the right, the switch and AAA server typically use either the RADIUS or TACACS+ protocol, both of which encrypt the passwords as they traverse the network.

The AAA server can also provide authorization and accounting features. For instance, for networking devices, IOS can be configured so that each user can be allowed to use only a specific subset of CLI commands. So, instead of having basically two levels of authority (user mode and privileged mode), each device can configure a custom set of command authority settings per user. Alternatively, those details can be centrally configured at the AAA server, rather than configuring the details at each device. As a result, different users can be allowed to use different subsets of the commands, but as identified through requests to the AAA server, rather than through repetitive, laborious configuration on every device. (Note that TACACS+ supports this particular command authorization function, whereas RADIUS does not.)

Table 6-2 lists some basic comparison points between TACACS+ and RADIUS.

Table 6-2: Comparisons Between TACACS+ and RADIUS

AAA Configuration Examples

Learning how to configure a router or switch to use a AAA server can be difficult. AAA requires that you learn several new commands. Besides all that, enabling AAA actually changes the rules used on a router for login authentication—for instance, you cannot just add a login command on the console line anymore after you have enabled AAA.

The exam topics use the phrase “describe” regarding AAA features, rather than configure, verify, or troubleshoot. However, to understand AAA on switches or routers, it helps to work through an example configuration. This next topic focuses on the big ideas behind a AAA configuration, instead of worrying about working through all the parameters, verifying the results, or memorizing a checklist of commands. The goal is to help you see how AAA on a switch or router changes login security.

NOTE: Throughout this book and the ICND1 Cert Guide, the login security details work the same on both routers and switches, with the exception that switches do not have an auxiliary port, whereas routers often do. But the configuration works the same on both routers and switches, so when this section mentions a switch for login security, the same concept applies to routers as well.

Everything you learned about switch login security for ICND1 in the ICND1 Cert Guide assumed an unstated default global command: no aaa new-model. That is, you had not yet added the aaa new-model global command to the configuration. Configuring AAA requires the aaa new-model command, and this single global command changes how that switch does login security.

The aaa new-model global command enables AAA services in the local switch (or router). It even enables new commands, commands that would not have been accepted before, and that would not have shown up when getting help with a question mark from the CLI. The aaa new-model command also changes some default login authentication settings. So, think of this command as the dividing line between using the original simple ways of login security versus a more advanced method.

After configuring the aaa new-model command on a switch, you need to define each AAA server, plus configure one or more groups of AAA servers, aptly named AAA groups. For each AAA server, configure its IP address and a key, and optionally the TCP or UDP port number used, as seen in the middle part of Figure 6-5. Then you create a server group that contains one or more AAA servers, as seen in the bottom of Figure 6-5. (Other configuration settings will then refer to the AAA server group rather than the AAA server.)

Figure 6-5: Enabling AAA and Defining AAA Servers and Groups

The configuration concepts in Figure 6-5 still have not completed the task of configuring AAA authentication. IOS uses the following additional logic to tie the pieces together:

- IOS does login authentication for the console, vty, and aux port, by default, based on the setting of the aaa authentication login default global command.

- The aaa authentication login default method1 method2... global command lists different authentication methods, including referencing a AAA group to be used (as shown at the bottom of Figure 6-5).

- The methods include: a defined AAA group of AAA servers; local, meaning a locally configured list of usernames/passwords; or line, meaning to use the password defined by the password line subcommand.

Basically, when you want to use AAA for login authentication on the console or vty lines, the most straightforward option uses the aaa authentication login default command. As Figure 6-6 shows, this command lists multiple authentication methods. The switch tries the first method, and if that method returns a definitive answer, the process is done. However, if that method is not available (for instance, none of the AAA servers is reachable over the IP network), IOS on the local device moves on to the next method.

Figure 6-6: Default Login Authentication Rules

The idea of defining at least a couple of methods for login authentication makes good sense. For instance, the first method could be a AAA group so that each engineer logs in to each device with that engineer’s unique username and password. However, you would not want the engineer to fail to log in just because the IP network is having problems and the AAA servers cannot send packets back to the switch. So, using a backup login method (a second method listed in the command) makes good sense.
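The fallback logic is worth seeing in code, because a common misreading is that a rejected login falls through to the next method. In the Python sketch below, only an unavailable method causes the next one to be tried. This is a model of the logic described above, not IOS code. All names are hypothetical, and denying the login when every method is unavailable is an assumption of this sketch.

```python
from enum import Enum
from typing import Callable, Dict, Sequence


class Result(Enum):
    ACCEPT = "accept"            # definitive: credentials are valid
    REJECT = "reject"            # definitive: credentials are invalid
    UNAVAILABLE = "unavailable"  # the method could not give an answer


Method = Callable[[str, str], Result]


def aaa_group_unreachable(username: str, password: str) -> Result:
    """Stand-in for a AAA group whose servers cannot be reached."""
    return Result.UNAVAILABLE


def make_local_method(users: Dict[str, str]) -> Method:
    """Stand-in for the local method (locally configured usernames)."""
    def local(username: str, password: str) -> Result:
        if users.get(username) == password:
            return Result.ACCEPT
        return Result.REJECT
    return local


def login_allowed(methods: Sequence[Method],
                  username: str, password: str) -> bool:
    for method in methods:
        result = method(username, password)
        if result is not Result.UNAVAILABLE:
            # A definitive answer ends the process, accept or reject.
            return result is Result.ACCEPT
    # Assumption: if no method can answer, the login is denied.
    return False


local = make_local_method({"admin": "backup-pw"})
# AAA servers unreachable, so the second method (local) decides.
print(login_allowed([aaa_group_unreachable, local], "admin", "backup-pw"))
# With only the AAA group listed, the same outage locks the engineer out.
print(login_allowed([aaa_group_unreachable], "admin", "backup-pw"))
```

The first call prints True and the second prints False, which is the practical argument for listing a backup method.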

Figure 6-7 shows three sample commands for perspective. All three commands reference the same AAA group (WO-AAA-Group). The command labeled with a 1 in the figure takes a shortsighted approach, using only one authentication method with the AAA group. Command 2 in the figure uses two authentication methods: one with AAA and a second method (local). (This command’s local keyword refers to the list of local username commands as configured on the local switch.) Command 3 in the figure again uses a AAA group as the first method, followed by the keyword line, which tells IOS to use the password line subcommand.

Examples of AAA Login Authentication Method Combinations

DHCP Snooping

To understand the kinds of risks that exist in modern networks, you have to first understand the rules. Then you have to think about how an attacker might take advantage of those rules in different ways. Some attacks might cause harm, and might be called a denial-of-service (DoS) attack. Or an attacker may gather more data to prepare for some other attack. Whatever the goal, for every protocol and function you learn in networking, there are possible methods to take advantage of those features to give an attacker an advantage.

Cisco chose to add one exam topic for this current CCNA R&S exam that focuses on mitigating attacks based on a specific protocol: DHCP. DHCP has become a very popular protocol, used in almost every enterprise, home, and service provider network. As a result, attackers have looked for methods to take advantage of DHCP. One way to help mitigate the risks of DHCP is to use a LAN switch feature called DHCP snooping.

This third of four major sections of the chapter works through the basics of DHCP snooping. It starts with the main idea, and then shows one example of how an attacker can misuse DHCP to gain an advantage. The last section explains the logic used by DHCP snooping.

DHCP Snooping Basics

DHCP snooping on a switch acts like a firewall or an ACL in many ways. It will watch for incoming messages on either all ports or some ports (depending on the configuration). It will look for DHCP messages, ignoring all non-DHCP messages and allowing those through. For any DHCP messages, the switch’s DHCP snooping logic will make a choice: allow the message or discard the message.

To be clear, DHCP snooping is a Layer 2 switch feature, not a router feature. Specifically, any switch that performs Layer 2 switching, whether it does only Layer 2 switching or acts as a multilayer switch, typically supports DHCP snooping. DHCP snooping must be done on a device that sits between devices in the same VLAN, which is the role of a Layer 2 switch rather than a Layer 3 switch or router.

The first big idea with DHCP snooping is the idea of trusted ports and untrusted ports. To understand why, ponder for a moment all the devices that might be connected to one switch. The list includes routers, servers, and even other switches. It includes end-user devices, such as PCs. It includes wireless access points, which in turn connect to end-user devices. Figure 6-8 shows a representation.

DHCP Snooping Basics: Client Ports Are Untrusted

DHCP snooping begins with the assumption that end-user devices are untrusted, while devices more within the control of the IT department are trusted. However, a device on an untrusted port can still use DHCP. Making a port untrusted for DHCP snooping instead means this:

Watch for incoming DHCP messages, and discard any that are considered to be abnormal for an untrusted port and therefore likely to be part of some kind of attack.

An Example DHCP-based Attack

To give you perspective, Figure 6-9 shows a legitimate user’s PC on the far right and the legitimate DHCP server on the far left. However, an attacker has connected his laptop to the LAN and started his DHCP attack. Remember, PC1’s first DHCP message will be a LAN broadcast, so the attacker’s PC will receive those LAN broadcasts from any DHCP clients like PC1. (In this case, assume PC1 is attempting to lease an IP address while the attacker is making his attack.)

DHCP Attack Supplies Good IP Address but Wrong Default Gateway

In this example, the DHCP server created and used by the attacker actually leases a useful IP address to PC1, in the correct subnet, with the correct mask. Why? The attacker wants PC1 to function, but with one twist. Notice the default gateway assigned to PC1: 10.1.1.2, which is the attacker’s PC address, rather than 10.1.1.1, which is R1’s address. Now PC1 thinks it has all it needs to connect to the network, and it does—but now all the packets sent by PC1 flow first through the attacker’s PC, creating a man-in-the-middle attack, as shown in Figure 6-10.

Unfortunate Result: DHCP Attack Leads to Man-in-the-Middle

The two steps in the figure show data flow once DHCP has completed. For any traffic destined to leave the subnet, PC1 sends its packets to its default gateway, 10.1.1.2, which happens to be the attacker. The attacker forwards the packets to R1. The PC1 user can connect to any and all applications just like normal, but now the attacker can keep a copy of anything sent by PC1.

How DHCP Snooping Works

The preceding example shows just one attack. Some attacks use an extra DHCP server (called a spurious DHCP server), and some attacks happen by using DHCP client functions in different ways. DHCP snooping considers how DHCP should work and filters out any messages that would not be part of a normal use of DHCP.

DHCP snooping needs a few configuration settings. First, the engineer enables DHCP snooping either globally on a switch or by VLAN (that is, enabled on some VLANs, and not on others). Once enabled, all ports are considered untrusted until configured as trusted.

Next, some switch ports need to be configured as trusted. Any switch ports connected to legitimate DHCP servers should be trusted. Additionally, ports connected to other switches, and ports connected to routers, should also be trusted. Why? Trusted ports are basically ports that could receive messages from legitimate DHCP servers in the network. The legitimate DHCP servers in a network are well known.

Just for a quick review, the ICND1 Cert Guide described the DHCP messages used in normal DHCP lease flows (DISCOVER, OFFER, REQUEST, ACK [DORA]). For these and other DHCP messages, a message is normally sent by either a DHCP client or a server, but not both. In the DORA messages, the client sends the DISCOVER and REQUEST, and the server sends the OFFER and ACK. Knowing that only DHCP servers should send DHCP OFFER and ACK messages, DHCP snooping allows incoming OFFER and ACK messages on trusted ports, but filters those messages if they arrive on untrusted ports.
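The trusted/untrusted check for the DORA messages can be sketched in a few lines of Python. The function name allow_on_port is hypothetical, and the sketch covers only the four DORA message types named above; it is an illustration of the rule, not the switch's actual implementation.

```python
SERVER_ONLY = {"OFFER", "ACK"}         # sent by DHCP servers in the DORA flow
CLIENT_ONLY = {"DISCOVER", "REQUEST"}  # sent by DHCP clients in the DORA flow


def allow_on_port(message_type: str, port_trusted: bool) -> bool:
    """Return True if the message passes the server-message check."""
    if port_trusted:
        # Trusted ports may receive messages from legitimate DHCP servers.
        return True
    # Untrusted ports must never receive server-only messages.
    return message_type not in SERVER_ONLY


for message in ("DISCOVER", "OFFER", "REQUEST", "ACK"):
    print(message, allow_on_port(message, port_trusted=False))
```

Client messages that pass this check on an untrusted port are still subject to the additional checks described later in this section.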

So, the first rule of DHCP snooping is for the switch to trust any ports on which legitimate messages from trusted DHCP servers might arrive. Conversely, by leaving a port untrusted, the switch is choosing to discard any incoming DHCP server-only messages. Figure 6-11 summarizes these points, with the legitimate DHCP server on the left, on a port marked as trusted.

Summary of Rules for DHCP Snooping

The logic for untrusted DHCP ports is a little more challenging. Basically, the untrusted ports are the real user population, all of which rely heavily on DHCP. Those ports also include those few people trying to attack the network with DHCP, and you cannot predict which of the untrusted ports have legitimate users and which are attacking the network. So the DHCP snooping function has to watch the DHCP messages over time, and even keep some state information in a DHCP Binding Table, so that it can decide when a DHCP message should be discarded.

The DHCP Binding Table is a list of key pieces of information about each successful lease of an IPv4 address. Each new DHCP message received on an untrusted port can then be compared to the DHCP Binding Table, and if the switch detects conflicts when comparing the DHCP message to the Binding Table, then the switch will discard the message.

To understand more specifically, first look at Figure 6-12, which shows a switch building one entry in its DHCP Binding Table. In this simple network, the DHCP client on the right leases IP address 10.1.1.11 from the DHCP server on the left. The switch’s DHCP snooping feature combines the information from the DHCP messages, with information about the port (interface F0/2, assigned to VLAN 11 by the switch), and puts that in the DHCP Binding Table.

Legitimate DHCP Client with DHCP Binding Entry Built by DHCP Snooping

Because of this DHCP binding table entry, DHCP snooping would now prevent another client on another switch port from claiming to be using that same IP address (10.1.1.11) or the same MAC address (2000.1111.1111). (Many DHCP client attacks will use the same IP address or MAC address as a legitimate host.)
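As a rough illustration, the binding table and its conflict check might be modeled as follows. The class and method names are hypothetical, the second port (F0/3) and the extra MAC and IP addresses are made up for the example, and the check is deliberately simplified: it only asks whether the MAC or IP address in a message is already bound to a different interface.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Binding:
    mac: str
    ip: str
    vlan: int
    interface: str


class BindingTable:
    def __init__(self) -> None:
        self._by_ip: Dict[str, Binding] = {}
        self._by_mac: Dict[str, Binding] = {}

    def add_lease(self, binding: Binding) -> None:
        """Record a successful lease, indexed by both IP and MAC address."""
        self._by_ip[binding.ip] = binding
        self._by_mac[binding.mac] = binding

    def conflicts(self, mac: str, ip: str, interface: str) -> bool:
        """True if the MAC or IP is already bound on a different interface."""
        for known in (self._by_ip.get(ip), self._by_mac.get(mac)):
            if known is not None and known.interface != interface:
                return True
        return False


table = BindingTable()
# The entry from the example: the client on F0/2 in VLAN 11.
table.add_lease(Binding("2000.1111.1111", "10.1.1.11", 11, "F0/2"))

# A client on another (hypothetical) port claiming the same IP address.
print(table.conflicts("2000.2222.2222", "10.1.1.11", "F0/3"))
```

A message from the legitimate client on F0/2 does not conflict with its own entry, so it would be allowed through.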

Note that beyond firewall-like rules of filtering based on logic, DHCP snooping can also be configured to rate limit the number of DHCP messages on an interface. For instance, by rate limiting incoming DHCP messages on untrusted interfaces, DHCP snooping can help prevent a DoS attack designed to overload the legitimate DHCP server, or to consume all the available DHCP IP address space.
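The rate-limiting idea can be sketched with a simple per-port, per-second counter. The class name DhcpRateLimiter and the limit of three messages per second are assumptions made for the example. The sketch only reports whether a message is within the limit; what a real switch does with a port that exceeds its limit is not modeled here.

```python
from collections import defaultdict
from typing import DefaultDict, Tuple


class DhcpRateLimiter:
    def __init__(self, max_per_second: int) -> None:
        self.max_per_second = max_per_second
        # (port, whole second) -> number of DHCP messages seen so far
        self._counts: DefaultDict[Tuple[str, int], int] = defaultdict(int)

    def allow(self, port: str, timestamp: float) -> bool:
        """Count the message and report whether the port is within its limit."""
        key = (port, int(timestamp))
        self._counts[key] += 1
        return self._counts[key] <= self.max_per_second


limiter = DhcpRateLimiter(max_per_second=3)
# Five messages arrive on one untrusted port within the same second.
burst = [limiter.allow("F0/3", 10.0 + i * 0.1) for i in range(5)]
print(burst)
```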

Summarizing DHCP Snooping Features

DHCP snooping can help reduce risk, particularly because DHCP is such a vital part of most networks. The following list summarizes some of the key points about DHCP snooping for easier exam study:

Trusted ports: Trusted ports allow all incoming DHCP messages.

Untrusted ports, server messages: Untrusted ports discard all incoming messages that are considered server messages.

Untrusted ports, client messages: Untrusted ports apply more complex logic for messages considered client messages. They check whether each incoming DHCP message conflicts with existing DHCP binding table information and, if so, discard the DHCP message. If the message has no conflicts, the switch allows the message through, which typically results in the addition of new DHCP Binding Table entries.

Rate limiting: Optionally limits the number of received DHCP messages per second, per port.

Switch Stacking and Chassis Aggregation

Cisco offers several options that allow customers to configure their Cisco switches to act cooperatively to appear as one switch, rather than as multiple switches. This final major section of the chapter discusses two major branches of these technologies: switch stacking, which is more typical of access layer switches, and chassis aggregation, more commonly found on distribution and core switches.

Traditional Access Switching Without Stacking

Imagine for a moment that you are in charge of ordering all the gear for a new campus, with several multistory office buildings. You take a tour of the space, look at drawings of the space, and start thinking about where all the people and computers will be. At some point, you get to the point of thinking about how many Ethernet ports you need in each wiring closet.

Imagine for one wiring closet you need 150 ports today, and you want to build enough switch port capacity to grow to 200 ports. What size switches do you buy? Do you get one switch for the wiring closet, with at least 200 ports in the switch? (The books do not discuss various switch models very much, but yes, you can buy LAN switches with hundreds of ports in one switch.) Or do you buy a pair of switches with at least 100 ports each? Or eight or nine switches with 24 access ports each?
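The arithmetic behind those options is a simple ceiling division, shown here as a short Python sketch. The function name switches_needed is hypothetical, and the 48-port size is added only for comparison.

```python
import math


def switches_needed(ports_required: int, ports_per_switch: int) -> int:
    """Smallest number of switches that provides at least ports_required ports."""
    return math.ceil(ports_required / ports_per_switch)


for size in (24, 48, 100):
    print(size, switches_needed(200, size))
```

With 24-port switches, eight switches provide 192 ports, which covers the 150 ports needed today but falls just short of 200, so the full target takes nine.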

There are pros and cons for using smaller numbers of large switches, and vice versa. To meet those needs, vendors such as Cisco offer switches with a wide range of port densities. However, a switch feature called switch stacking gives you some of the benefits of both worlds.

To appreciate the benefits of switch stacking, imagine a typical LAN design like the one shown in Figure 6-13. The figure shows the conceptual design, with two distribution switches and four access layer switches.

Typical Campus Design: Access Switches and Two Distribution Switches

For later comparison, let me emphasize a few points here. Access switches A1 through A4 all operate as separate devices. The network engineer must configure each. They each have an IP address for management. They each run CDP, STP, and maybe VTP. They each have a MAC address table, and they each forward Ethernet frames based on that MAC address table. Each switch probably has very similar configuration, but that configuration is separate, and all the functions are separate.

Now picture those same four access layer switches physically, not in Figure 6-13, but as you would imagine them in a wiring closet, even in the same rack. In this case, imagine all four access switches sit in the same rack in the same closet. All the wiring on that floor of the building runs back to the wiring closet, and each cable is patched into some port in one of these four switches. Each switch might be one rack unit (RU) tall (1.75 inches), and they all sit one on top of the other.

Switch Stacking of Access Layer Switches

The scenario described so far is literally a stack of switches one above the other. Switch stacking technology allows the network engineer to make that stack of physical switches act like one switch. For instance, if a switch stack was made from the four switches in Figure 6-13, the following would apply:

The stack would have a single management IP address.

The engineer would connect with Telnet or SSH to one switch (with that one management IP address), not multiple switches.

One configuration file would include all interfaces in all four physical switches.

STP, CDP, and VTP would run on one switch, not multiple switches.

The switch ports would appear as if all are on the same switch.

There would be one MAC address table, and it would reference all ports on all physical switches.

The list could keep going much longer for all possible switch features, but the point is that switch stacking makes the switches act as if they are simply parts of a single larger switch.

To make that happen, the switches must be connected together with a special network. The network does not use standard Ethernet ports. Instead, the switches have special hardware ports called stacking ports. With the Cisco FlexStack and FlexStack-Plus stacking technology, a stacking module must be inserted into each switch, and then connected with a stacking cable.

NOTE: Cisco has created a few switch stacking technologies over the years, so to avoid having to refer to them all, note that this section describes Cisco’s FlexStack and FlexStack-Plus options. These stacking technologies are supported to different degrees in the popular 2960-S, 2960-X, and 2960-XR switch families.

The stacking cables together make a ring between the switches as shown in Figure 6-14. That is, the switches connect in series, with the last switch connecting again to the first. Using full duplex on each link, the stacking modules and cables create two paths to forward data between the physical switches in the stack. The switches use these connections to communicate between the switches to forward frames and to perform other overhead functions.

Stacking Cables Between Access Switches in the Same Rack

Note that each stacking module has two ports with which to connect to another switch’s stacking module. For instance, if the four switches were all 2960-XR switches, each would need one stacking module, and four cables total to connect the four switches as shown. Figure 6-15 shows the same idea as Figure 6-14, but as a photo that shows the stacking cables on the left side of the figure.

Photo of Four 2960X Switches Cabled on the Left with Stacking Cables

You should think of switch stacks as literally a stack of switches in the same rack. The stacking cables are short, with the expectation that the switches sit together in the same room and rack. For instance, Cisco offers stacking cables of 0.5, 1, and 3 meters long for the FlexStack and FlexStack-Plus stacking technology discussed in more depth at the end of this section.

Switch Stack Operation as a Single Logical Switch

With a switch stack, the switches together act as one logical switch. This term (logical switch) is meant to emphasize that there are obviously physical switches, but they act together as one switch.

To make it all work, one switch acts as a stack master to control the rest of the switches. The links created by the stacking cables allow the physical switches to communicate, but the stack master is in control of the work. For instance, if you number the physical switches as 1, 2, 3, and 4, a frame might arrive on switch 4 and need to exit a link on switch 3. If switch 1 were the stack master, switches 1, 3, and 4 would all need to communicate over the stack links to forward that frame. But switch 1, as stack master, would do the matching of the MAC address table to choose where to forward the frame.
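The example above can be modeled with one MAC address table for the whole stack. This Python sketch follows the description in the text, in which the stack master performs the lookup; the table entries, port names, and the function name members_involved are hypothetical.

```python
from typing import Dict, Set, Tuple

# One MAC table for the whole stack: MAC address -> (member number, port).
# The entries and port names are made up for this example.
stack_mac_table: Dict[str, Tuple[int, str]] = {
    "0200.3333.3333": (3, "Gi3/0/5"),
    "0200.4444.4444": (4, "Gi4/0/7"),
}


def members_involved(master: int, ingress_member: int, dest_mac: str,
                     table: Dict[str, Tuple[int, str]]) -> Set[int]:
    """Return the stack members that take part in forwarding one frame."""
    egress_member, _port = table[dest_mac]  # lookup done by the stack master
    return {master, ingress_member, egress_member}


# A frame arrives on switch 4 and must exit a port on switch 3; switch 1 is master.
print(sorted(members_involved(1, 4, "0200.3333.3333", stack_mac_table)))
```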

Figure 6-16 focuses on the LAN design impact of how a switch stack acts like one logical switch. The figure shows the design with no changes to the cabling between the four access switches and the distribution switches. Formerly, each separate access switch had two links to the distribution layer: one connected to each distribution switch (see Figure 6-13). That cabling is unchanged. However, acting as one logical switch, the switch stack now operates as if it is one switch, with four uplinks to each distribution switch. Add a little configuration to put each set of four links into an EtherChannel, and you have the design shown in Figure 6-16.

Stack Acts Like One Switch

The stack also simplifies operations. Imagine for instance the scope of an STP topology for a VLAN that has access ports in all four of the physical access switches in this example. That Spanning Tree would include all six switches. With the switch stack acting as one logical switch, that same VLAN now has only three switches in the STP topology, and is much easier to understand and predict.

Cisco FlexStack and FlexStack-Plus

Just to put a finishing touch on the idea of a switch stack, this closing topic examines a few particulars of Cisco’s FlexStack and FlexStack-Plus stacking options.

Cisco’s stacking technologies require that Cisco plan to include stacking as a feature in a product, given that it requires specific hardware. Cisco has a long history of building new model series of switches with model numbers that begin with 2960. Per Cisco’s documentation, Cisco created one stacking technology, called FlexStack, as part of the introduction of the 2960-S model series. Cisco later enhanced FlexStack with FlexStack-Plus, adding support with products in the 2960-X and 2960-XR model series. For switch stacking to support future designs, the capabilities of the stacking hardware tend to increase over time as well, as seen in the comparisons between FlexStack and FlexStack-Plus in Table 6-3.

Comparisons of Cisco’s FlexStack and FlexStack-Plus Options

Chassis Aggregation

The term chassis aggregation refers to another Cisco technology used to make multiple switches operate as a single switch. From a big picture perspective, switch stacking is more often used and offered by Cisco in switches meant for the access layer. Chassis aggregation is meant for more powerful switches that sit in the distribution and core layers. Summarizing some of the key differences, chassis aggregation

Typically is used for higher-end switches used as distribution or core switches

Does not require special hardware adapters, instead using Ethernet interfaces

Aggregates two switches

Arguably is more complex but also more functional

The big idea of chassis aggregation is the same as for a switch stack: make multiple switches act like one switch, which gives you some availability and design advantages. But much of the driving force behind chassis aggregation is about high-availability design for LANs. This section works through a few of those thoughts to give you the big ideas about the thinking behind high availability for the core and distribution layer.

NOTE: This section looks at the general ideas of chassis aggregation, but for further reading about a specific implementation, search at Cisco.com for Cisco’s Virtual Switching System (VSS) that is supported on 6500 and 6800 series switches.

High Availability with a Distribution/Core Switch

Even without chassis aggregation, the distribution and core switches need to have high availability. The next few pages look at how the switches built for use as distribution and core switches can help improve availability, even without chassis aggregation.

If you were to look around a medium to large enterprise campus LAN, you would typically find many more access switches than distribution and core switches. For instance, you might have four access switches per floor, with ten floors in a building, for 40 access switches. That same building probably has only a pair of distribution switches.

And why two distribution switches instead of one? Because if the design used only one distribution switch, and it failed, none of the devices in the building could reach the rest of the network. So, if two distribution switches are good, why not four? Or eight? One reason is cost, another complexity. Done right, a pair of distribution switches for a building can provide the right balance of high availability and low cost/complexity.

The availability features of typical distribution and core switches allow network designers to create a great availability design with just two distribution or core switches. Cisco makes typical distribution/core switches with more redundancy. For instance, Figure 6-17 shows a representation of a typical chassis-based Cisco switch. It has slots that can be used for line cards—that is, cards with Ethernet ports. It has dual supervisor cards that do frame and packet forwarding. And it has two power supplies, each of which can be connected to power feeds from different electrical substations if desired.

Common Line-Card Arrangement in a Modular Cisco Distribution/Core Switch

Now imagine two distribution switches sitting beside each other in a wiring closet as shown in Figure 6-18. A design would usually connect the two switches with an EtherChannel. For better availability, the EtherChannel could use ports from different line cards, so that if one line card failed due to some hardware problem, the EtherChannel would still work.

Using EtherChannel and Different Line Cards

Improving Design and Availability with Chassis Aggregation

Next, consider the effect of adding chassis aggregation to a pair of distribution switches. In terms of effect, the two switches act as one switch, much like switch stacking. The particulars of how chassis aggregation achieves that differ.

Figure 6-19 shows a comparison. On the left, the two distribution switches act independently, and on the right, the two distribution switches are aggregated. In both cases, each distribution switch connects with a single Layer 2 link to the access layer switches A1 and A2, which act independently (that is, they do not use switch stacking). So, the only difference between the left and right examples is that on the right the distribution switches use chassis aggregation.

One Design Advantage of Aggregated Distribution Switches

The right side of the figure shows the aggregated switch that appears as one switch to the access layer switches. In fact, even though the uplinks connect into two different switches, they can be configured as an EtherChannel through a feature called Multichassis EtherChannel (MEC).

The following list describes some of the advantages of using chassis aggregation. Note that many of the benefits should sound familiar from the switch stacking discussion. The one difference in this list has to do with the active/active data plane.

Multichassis EtherChannel (MEC): Uses the EtherChannel between the two physical switches.

Active/Standby Control Plane: Simpler operation for control plane because the pair acts as one switch for control plane protocols: STP, VTP, EtherChannel, ARP, routing protocols.

Active/Active data plane: Takes advantage of forwarding power of supervisors on both switches, with active Layer 2 and Layer 3 forwarding on the supervisors of both switches. The switches synchronize their MAC and routing tables to support that process.

Single switch management: Simpler operation of management protocols by running management protocols (Telnet, SSH, SNMP) on the active switch; configuration is synchronized automatically with the standby switch.

Finally, using chassis aggregation and switch stacking together in the same network has some great design advantages. Look back to Figure 6-13 at the beginning of this section. It showed two distribution switches and four access switches, all acting independently, with one uplink from each access switch to each distribution switch. If you enable switch stacking for the four access switches, and enable chassis aggregation for the two distribution switches, you end up with a topology as shown in Figure 6-20.

Making Six Switches Act like Two

INCLW01 Week 1 Practice Quiz

week 1 practice quiz

INCLW01 Week 2 Module

week 2 module

Video Lessons

Video 1: Introduction

Video 2: CCNA Exam Topics Overview

Video 3: Networking Layers Review

Video 4: Data Encapsulation Review

Video 5: How To on UTP Cabling (see other video below)

Video 6: Device and What Cable to Use

Video 7: Data Link Behavior

Video 8: MAC Address Review

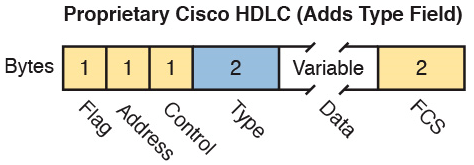

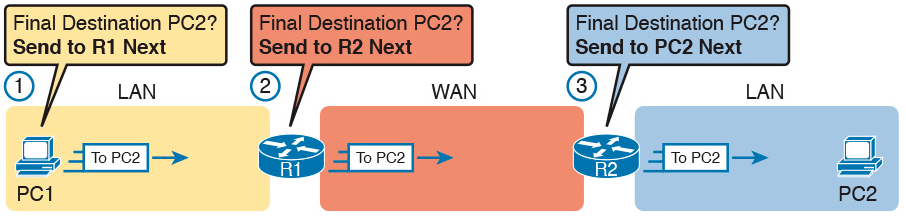

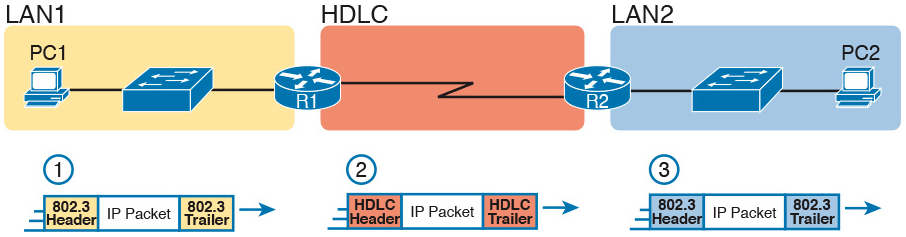

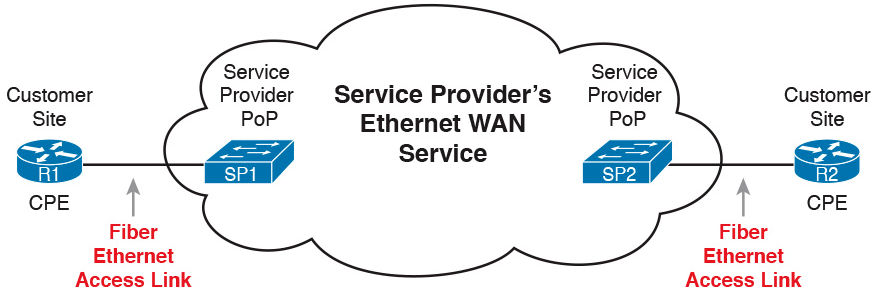

Video 9: Type Field and FCS

Video 10: Full-/Half-Duplex & Collision

Video 11: WAN Concepts

Video 12: WAN Links

Video 13: HDLC - Leased Lines

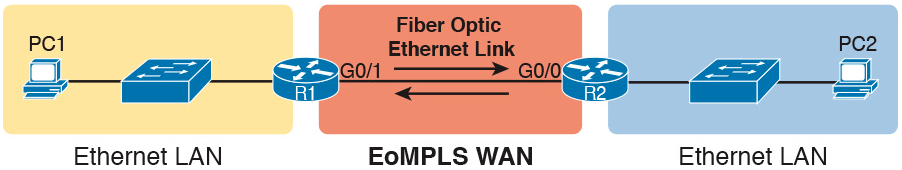

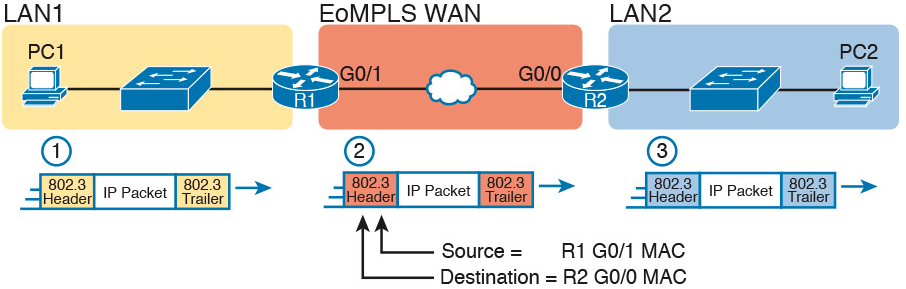

Video 14: Ethernet WAN

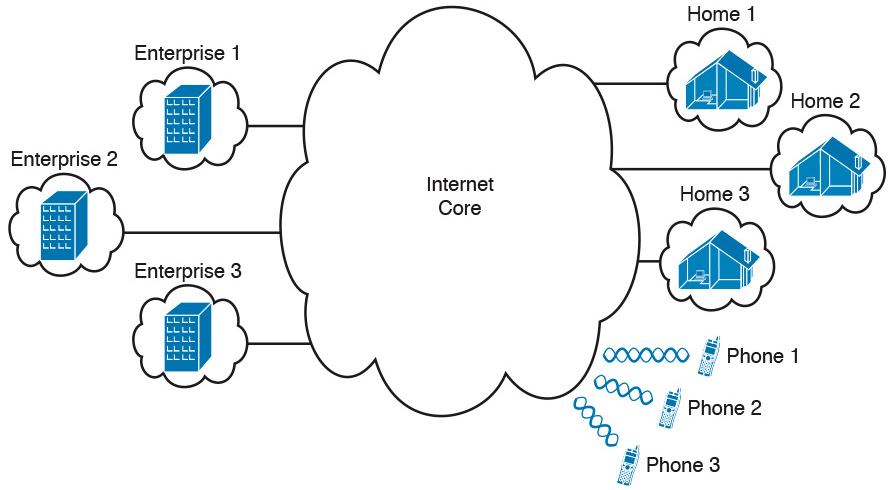

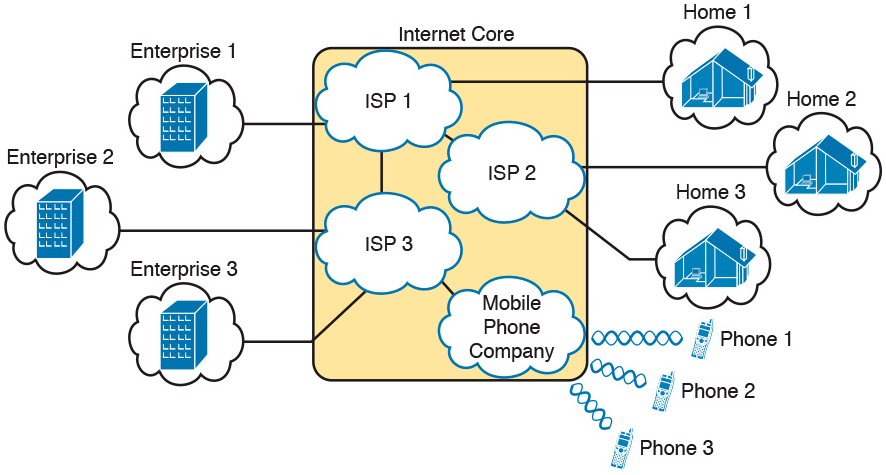

Video 15: Internet Core

Video 16: IP Packet

Video 17: IP Address and Classes

Video 18: IP Subnetting

Video 19: Default Gateway

Other Videos

Video 1: How to Fabricate UTP Cable 1

Video 2: How to Fabricate UTP Cable 2

Lab Setup Videos

Video 1: Lab Setup Introduction

Video 2: Download .ovpn via WinSCP

Video 3: IOU and IOS Upload

Video 4: Resolving Issue on Not Executable IOU Devices

Video 5: Lab Topology and SuperPutty Setup

Text Resources

Practice Quizzes

VLAN Trunking Protocol

Engineers sometimes have a love/hate relationship with VLAN Trunking Protocol (VTP). VTP serves a useful purpose, distributing the configuration of the [no] vlan vlan-id command among switches. As a result, the engineer configures the vlan command on one switch, and all the rest of the switches are automatically configured with that same command.

Unfortunately, the automated update powers of VTP can also be dangerous. For example, an engineer could delete a VLAN on one switch, not realizing that the command actually deleted the VLAN on all switches. And deleting a VLAN impacts a switch’s forwarding logic: Switches do not forward frames for VLANs that are not defined to the switch.

This chapter discusses VTP, from concept through troubleshooting. The first major section discusses VTP concepts, while the second section shows how to configure and verify VTP. The third section walks through troubleshooting, with some discussion of the risks that cause some engineers to just not use VTP. (In fact, the entirety of the ICND1 Cert Guide’s discussion of VLAN configuration assumes VTP uses the VTP transparent mode, which effectively disables VTP from learning and advertising VLAN configuration.)

As for exam topics, note that the Cisco exam topics that mention VTP also mention DTP. Chapter 1, “Implementing Ethernet Virtual LANs,” discussed how Dynamic Trunking Protocol (DTP) is used to negotiate VLAN trunking. This chapter does not discuss DTP, leaving that topic for Chapter 1.

VLAN Trunking Protocol (VTP) Concepts

The Cisco-proprietary VLAN Trunking Protocol (VTP) provides a means by which Cisco switches can exchange VLAN configuration information. In particular, VTP advertises the existence of each VLAN based on its VLAN ID and the VLAN name.

This first major section of the chapter discusses the major features of VTP in concept, in preparation for the VTP implementation (second section) and VTP troubleshooting (third section).

Basic VTP Operation

Think for a moment about what has to happen in a small network of four switches when you need to add two new hosts, and to put those hosts in a new VLAN that did not exist before. Figure 5-1 shows some of the main configuration concepts.

First, remember that for a switch to be able to forward frames in a VLAN, that VLAN must be defined on that switch. In this case, Step 1 shows the independent configuration of VLAN 10 on the four switches: the two distribution switches and the two access layer switches. With the rules discussed in Chapter 1 (which assumed VTP transparent mode, by the way), all four switches need to be configured with the vlan 10 command.

Step 2 shows the additional step to configure each access port to be in VLAN 10 as per the design. That is, in addition to creating the VLAN, the individual ports need to be added to the VLAN, as shown for servers A and B with the switchport access vlan 10 command.

VTP, when used for its intended purpose, would allow the engineer to create the VLAN (the vlan 10 command) on one switch only, with VTP then automatically updating the configuration of the other switches.

VTP defines a Layer 2 messaging protocol that the switches can use to exchange VLAN configuration information. When a switch changes its VLAN configuration—including the vlan vlan-id command—VTP causes all the switches to synchronize their VLAN configuration to include the same VLAN IDs and VLAN names. The process is somewhat like a routing protocol, with each switch sending periodic VTP messages. However, routing protocols advertise information about the IP network, whereas VTP advertises VLAN configuration.

Figure 5-2 shows one example of how VTP works in the same scenario used for Figure 5-1. Figure 5-2 starts with the need for a new VLAN 10, and two servers to be added to that VLAN. At Step 1, the network engineer creates the VLAN with the vlan 10 command on switch SW1. SW1 then uses VTP to advertise that new VLAN configuration to the other switches, as shown at Step 2; note that the other three switches do not need to be configured with the vlan 10 command. At Step 3, the network engineer still must configure the access ports with the switchport access vlan 10 command, because VTP does not advertise the interface and access VLAN configuration.

Distributing the vlan 10 Command with VTP

Synchronizing the VTP Database

To use VTP to announce and/or learn VLAN configuration information, a switch must use either VTP server mode or client mode. The third VTP mode, transparent mode, tells a switch to not learn VLAN configuration and to not advertise VLAN configuration, effectively making a VTP transparent mode switch act as if it were not there, at least for the purposes of VTP. This next topic works through the mechanisms used by switches acting as either VTP server or client.

VTP servers allow the network engineer to create VLANs (and other related commands) from the CLI, whereas VTP clients do not allow the network engineer to create VLANs. You have seen many instances of the vlan vlan-id command at this point in your study, the command that creates a new VLAN in a switch. VTP servers are allowed to continue to use this command to create VLANs, but switches placed in VTP client mode reject the vlan vlan-id command, because VTP client switches cannot create VLANs.
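The difference between the modes can be shown with a small Python model. The Switch class and its vlan_command method are hypothetical; the sketch only models whether the vlan vlan-id command is accepted, and it assumes that a transparent mode switch still accepts the command for its own local configuration.

```python
from typing import Dict, Optional

VTP_MODES = ("server", "client", "transparent")


class Switch:
    def __init__(self, name: str, vtp_mode: str) -> None:
        if vtp_mode not in VTP_MODES:
            raise ValueError(f"unknown VTP mode: {vtp_mode}")
        self.name = name
        self.vtp_mode = vtp_mode
        self.vlans: Dict[int, str] = {1: "default"}

    def vlan_command(self, vlan_id: int, name: Optional[str] = None) -> bool:
        """Model the vlan vlan-id command; return False if it is rejected."""
        if self.vtp_mode == "client":
            return False  # VTP clients cannot create VLANs from the CLI
        self.vlans[vlan_id] = name or f"VLAN{vlan_id:04d}"
        return True


server = Switch("SW1", "server")
client = Switch("SW2", "client")
print(server.vlan_command(10), client.vlan_command(10))
```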

With that main difference in mind, VTP servers allow the creation of VLANs (and related configuration) via the usual commands. The server then advertises that configuration information over VLAN trunks. The overall flow works something like this:

1. For each trunk, send VTP messages, and listen to receive them.

2. Check my local VTP parameters versus the VTP parameters announced in the VTP messages received on a trunk.

3. If the VTP parameters match, attempt to synchronize the VLAN configuration databases between the two switches.

NOTE: The name VLAN Trunking Protocol is based on the fact that this protocol works specifically over VLAN trunks, as noted in item 1 in this list.

Done correctly, VTP causes all the switches in the same administrative VTP domain (the set of switches with the same domain name and password) to converge to have the exact same configuration of VLAN information. Over time, each time the VLAN configuration is changed on any VTP server, all other switches in the VTP domain automatically learn of those configuration changes.

VTP does not think of the VLAN configuration as lots of small pieces of information, but rather as one VLAN configuration database. The configuration database has a configuration revision number which is incremented by 1 each time a server changes the VLAN configuration. The VTP synchronization process hinges on the idea of making sure each switch uses the VLAN configuration database that has the best (highest) revision number.
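The revision-number idea can be sketched in a few lines of Python. This is only a simplified model under stated assumptions, not how Cisco IOS implements VTP: the class name VlanDatabase, the function adopt_if_better, and the VLAN names are all hypothetical and chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class VlanDatabase:
    """The whole VLAN configuration, treated as one versioned unit."""
    revision: int = 0
    vlans: dict = field(default_factory=dict)  # VLAN ID -> VLAN name

    def add_vlan(self, vlan_id: int, name: str) -> None:
        # Each configuration change on a server adds 1 to the revision number.
        self.vlans[vlan_id] = name
        self.revision += 1


def adopt_if_better(local: VlanDatabase, advertised: VlanDatabase) -> VlanDatabase:
    """Return the database a switch should use after hearing an advertisement.

    The higher revision number wins, and the database is taken as a whole
    rather than merged piece by piece.
    """
    if advertised.revision > local.revision:
        return VlanDatabase(advertised.revision, dict(advertised.vlans))
    return local


# Both switches start out synchronized at revision 3 (illustrative values).
sw1 = VlanDatabase(revision=3, vlans={1: "default"})
sw2 = VlanDatabase(revision=3, vlans={1: "default"})

sw1.add_vlan(10, "VLAN0010")        # the server's revision goes from 3 to 4
sw2 = adopt_if_better(sw2, sw1)     # SW2 adopts the higher-revision database
print(sw2.revision, sorted(sw2.vlans))  # 4 [1, 10]
```

Note that the comparison looks only at the revision number, never at the contents of the database, which is why the text describes the highest revision as the "best" one.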

Figure 5-3 begins an example that demonstrates how the VLAN configuration database revision numbers work. At the beginning of the example, all the switches have converged to use the VLAN database that uses revision number 3. The example then shows:

1. The network engineer defines a new VLAN with the vlan 10 command on switch SW1.

2. SW1, a VTP server, changes the VTP revision number for its own VLAN configuration database from 3 to 4.

3. SW1 sends VTP messages over the VLAN trunk to SW2 to begin the process of telling SW2 about the new VTP revision number for the VLAN configuration database.

At this point, only switch SW1 has the best VLAN configuration database with the highest revision number (4). Figure 5-4 shows the next few steps, picking up the process where Figure 5-3 stopped. Upon receiving the VTP messages from SW1, as shown in Step 3 of Figure 5-3, at Step 4 in Figure 5-4, SW2 starts using that new VLAN database. Step 5 emphasizes the fact that as a result, SW2 now knows about VLAN 10. SW2 then sends VTP messages over the trunk to the next switch, SW3 (Step 6).

With VTP working correctly on all four switches, all the switches will eventually use the exact same configuration, with VTP revision number 4, as advertised with VTP.

Figure 5-4 also shows a great example of one key similarity between VTP clients and servers: both will learn and update their VLAN database from VTP messages received from another switch. Note that the process shown in Figures 5-3 and 5-4 works the same whether switches SW2, SW3, and SW4 are VTP clients or servers, in any combination. In this scenario, the only switch that must be a VTP server is switch SW1, where the vlan 10 command was configured; a VTP client would have rejected the command.

For instance, in Figure 5-5, imagine switches SW2 and SW4 were VTP clients, but switch SW3 was a VTP server. With the same scenario discussed in Figures 5-3 and 5-4, the new VLAN configuration database is propagated just as described in those earlier figures, with SW2 (client), SW3 (server), and SW4 (client) all learning of and using the new database with revision number 4.
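The following Python sketch illustrates the point that clients and servers both learn from VTP messages, while only servers accept new VLANs from the CLI. It is a toy model, not the real protocol: the Switch class, the propagate helper, and the assumption that the four switches are connected in a simple line (SW1 to SW2 to SW3 to SW4) are all hypothetical and may not match the topology in the figures.

```python
class Switch:
    def __init__(self, name, mode, revision=3, vlans=None):
        self.name = name
        self.mode = mode                  # "server" or "client"
        self.revision = revision
        self.vlans = set(vlans or {1})

    def create_vlan(self, vlan_id):
        # Models the vlan vlan-id command: only servers accept it.
        if self.mode != "server":
            raise PermissionError(f"{self.name}: VTP client cannot create VLANs")
        self.vlans.add(vlan_id)
        self.revision += 1

    def receive(self, sender):
        # Clients and servers alike adopt a database with a higher revision.
        if sender.revision > self.revision:
            self.revision = sender.revision
            self.vlans = set(sender.vlans)
            return True
        return False


def propagate(chain):
    """Pass the update hop by hop along a line of trunk-connected switches."""
    for upstream, downstream in zip(chain, chain[1:]):
        downstream.receive(upstream)


sw1 = Switch("SW1", "server")
sw2 = Switch("SW2", "client")
sw3 = Switch("SW3", "server")
sw4 = Switch("SW4", "client")

sw1.create_vlan(10)                       # revision 3 -> 4 on SW1
propagate([sw1, sw2, sw3, sw4])
print([(s.name, s.revision, 10 in s.vlans) for s in (sw2, sw3, sw4)])
```

In this model, swapping the modes of SW2, SW3, and SW4 does not change the result, because receive() never checks the mode. Only create_vlan() does.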

NOTE: The complete process by which a server changes the VLAN configuration and all VTP switches learn the new configuration, resulting in all switches knowing the same VLAN IDs and names, is called VTP synchronization.

After VTP synchronization is completed, VTP servers and clients also send periodic VTP messages every 5 minutes. If nothing changes, the messages keep listing the same VLAN database revision number, and no changes occur. Then when the configuration changes in one of the VTP servers, that switch increments its VTP revision number by 1, and its next VTP messages announce a new VTP revision number, so that the entire VTP domain (clients and servers) synchronizes to use the new VLAN database.

Requirements for VTP to Work Between Two Switches

When a VTP client or server connects to another VTP client or server switch, Cisco IOS requires that the following three facts be true before the two switches will process VTP messages received from the neighboring switch:

- The link between the switches must be operating as a VLAN trunk (ISL or 802.1Q).

- The two switches’ case-sensitive VTP domain name must match.

- If configured on at least one of the switches, both switches must have configured the same case-sensitive VTP password.
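The three requirements can be expressed as a single check, sketched here in Python. The names VtpSettings and will_process_vtp and the sample domain names are hypothetical. The sketch covers only the three conditions listed above and does not model the default (null) domain name or any other IOS behavior.

```python
from dataclasses import dataclass


@dataclass
class VtpSettings:
    domain: str
    password: str = ""   # empty string models "no password configured"


def will_process_vtp(local: VtpSettings, neighbor: VtpSettings,
                     link_is_trunk: bool) -> bool:
    """Return True only if all three listed requirements are met."""
    if not link_is_trunk:                  # requirement 1: link must be a trunk
        return False
    if local.domain != neighbor.domain:    # requirement 2: case-sensitive match
        return False
    # Requirement 3: if either side has a password, both must match exactly.
    # Two unconfigured (empty) passwords also count as a match.
    return local.password == neighbor.password


a = VtpSettings("Campus", "Secret1")
print(will_process_vtp(a, VtpSettings("Campus", "Secret1"), True))   # True
print(will_process_vtp(a, VtpSettings("campus", "Secret1"), True))   # False
print(will_process_vtp(a, VtpSettings("Campus"), True))              # False
```

The second and third calls show the two most common mistakes: a domain name that differs only in case, and a password configured on only one of the two switches.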

The VTP domain name provides a design tool by which engineers can create multiple groups of VTP switches, called VTP domains, whose VLAN configurations are autonomous. To do so, the engineer can configure one set of switches in one VTP domain and another set in another VTP domain. Switches in one domain will ignore VTP messages from switches in the other domain, and vice versa.

The VTP password mechanism provides a means by which a switch can prevent malicious attackers from forcing a switch to change its VLAN configuration. The password itself is never transmitted in clear text.

VTP Version 1 Versus Version 2

Cisco supports three VTP versions, aptly numbered versions 1, 2, and 3. Interestingly, the current ICND2/CCNA exam topics mention versions 1 and 2 specifically, but omit version 3. Version 3 adds more capabilities and features beyond versions 1 and 2, and as a result is a little more complex. Versions 1 and 2 are relatively similar, with version 2 updating version 1 to provide some specific feature updates. For example, version 2 added support for a type of LAN called Token Ring, but Token Ring is no longer even found in Cisco’s product line.

For the purposes of configuring, verifying, and troubleshooting VTP today, versions 1 and 2 have no meaningful difference. For instance, two switches can be configured as VTP servers, one using VTP version 1 and one using VTP version 2, and they do exchange VTP messages and learn from each other.

The one difference between VTP versions 1 and 2 that might matter has to do with the behavior of a VTP transparent mode switch. By design, VTP transparent mode is meant to allow a switch to be configured to not synchronize with other switches, but to also pass the VTP messages to VTP servers and clients. That is, the transparent mode switch is transparent to the intended purpose of VTP: servers and clients synchronizing. One of the requirements for transparent mode switches to forward the VTP messages sent by servers and clients is that the VTP versions must match.

VTP Pruning

By default, Cisco IOS on LAN switches allows frames in all configured VLANs to be passed over a trunk. Switches flood broadcasts (and unknown destination unicasts) in each active VLAN out these trunks.

However, using VTP can cause too much flooded traffic to flow into parts of the network. VTP advertises any new VLAN configured in a VTP server to the other server and client switches in the VTP domain. However, when it comes to frame forwarding, there may not be any need to flood frames to all switches, because some switches may not connect to devices in a particular VLAN. For example, in a campus LAN with 100 switches, all the devices in VLAN 50 may exist on only 3 to 4 switches. However, if VTP advertises VLAN 50 to all the switches, a broadcast in VLAN 50 could be flooded to all 100 switches.

One solution to manage the flow of broadcasts is to manually configure the allowed VLAN lists on the various VLAN trunks. However, doing so requires a manual configuration process. A better option might be to allow VTP to dynamically determine which switches do not have access ports in each VLAN, and prune (remove) those VLANs from the appropriate trunks to limit flooding. VTP pruning simply means that the appropriate switch trunk interfaces do not flood frames in that VLAN.

NOTE: The section “Mismatched Supported VLAN List on Trunks” in Chapter 4, “LAN Troubleshooting,” discusses the various reasons why a switch trunk does not forward frames in a VLAN, including the allowed VLAN list. That section also briefly references VTP pruning.

Figure 5-6 shows an example of VTP pruning, showing a design that makes the VTP pruning feature more obvious. In this figure, two VLANs are used: 10 and 20. However, only switch SW1 has access ports in VLAN 10, and only switches SW2 and SW3 have access ports in VLAN 20. With this design, a frame in VLAN 20 does not need to be flooded to the left to switch SW1, and a frame in VLAN 10 does not need to be flooded to the right to switches SW2 and SW3.

Figure 5-6 VTP Pruning Example

Figure 5-6 shows two steps that result in VTP pruning VLAN 20 from SW2’s G0/2 trunk:

Step 1. SW1 knows about VLAN 20 from VTP, but switch SW1 does not have access ports in VLAN 20. So SW1 announces to SW2 that SW1 would like to prune VLAN 20, so that SW1 no longer receives data frames in VLAN 20.

Step 2. VTP on switch SW2 prunes VLAN 20 from its G0/2 trunk. As a result, SW2 will no longer flood VLAN 20 frames out trunk G0/2 to SW1.

VTP pruning increases the available bandwidth by restricting flooded traffic. VTP pruning is one of the two most compelling reasons to use VTP, with the other reason being to make VLAN configuration easier and more consistent.
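The pruning decision in the two steps above can be sketched as simple set arithmetic in Python. This is a simplification under stated assumptions: the function prune_requests and its parameters are hypothetical, and the model ignores details of real VTP pruning such as which VLANs are eligible for pruning.

```python
def prune_requests(known_vlans, access_vlans, downstream_vlans=frozenset()):
    """VLANs a switch asks its neighbor to stop flooding toward it.

    A VLAN is still needed if the switch has access ports in it, or if
    switches reached through this one need it (downstream_vlans).
    """
    needed = set(access_vlans) | set(downstream_vlans)
    return set(known_vlans) - needed


known = {10, 20}                       # VLANs learned through VTP

# Step 1: SW1 has access ports only in VLAN 10, so it asks SW2 to prune VLAN 20.
sw1_requests = prune_requests(known, access_vlans={10})

# Step 2: SW2 stops flooding the pruned VLANs out its trunk toward SW1.
sw2_trunk_to_sw1 = known - sw1_requests

print(sorted(sw1_requests), sorted(sw2_trunk_to_sw1))  # [20] [10]
```

The downstream_vlans parameter hints at why pruning must be decided per trunk: a switch in the middle of the network cannot prune a VLAN that a switch behind it still needs.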

Summary of VTP Features

Table 5-2 offers a comparative overview of the three VTP modes.

Table 5-2 VTP Features

VTP Configuration and Verification

VTP configuration requires only a few simple steps, but VTP has the power to cause significant problems, either by accidental poor configuration choices or by malicious attacks. This second major section of the chapter focuses on configuring VTP correctly and verifying its operation. The third major section then looks at troubleshooting VTP, which includes being careful to avoid harmful scenarios.

Using VTP: Configuring Servers and Clients

Before configuring VTP, the network engineer needs to make some choices. In particular, assuming that the engineer wants to make use of VTP’s features, the engineer needs to decide which switches will be in the same VTP domain, meaning that these switches will learn VLAN configuration information from each other. The VTP domain name must be chosen, along with an optional but recommended VTP password. (Both the domain name and password are case sensitive.) The engineer must also choose which switches will be servers (usually at least two for redundancy) and which will be clients.

After the planning steps are completed, the following steps can be used to configure VTP:

Step 1. Use the vtp mode {server | client} command in global configuration mode to enable VTP on the switch as either a server or client.

Step 2. On both clients and servers, use the vtp domain domain-name command in global configuration mode to configure the case-sensitive VTP domain name.

Step 3. (Optional) On both clients and servers, use the vtp password password-value command in global configuration mode to configure the case-sensitive password.

Step 4. (Optional) On servers, use the vtp pruning global configuration command to make the domain-wide VTP pruning choice.

Step 5. (Optional) On both clients and servers, use the vtp version {1 | 2} command in global configuration mode to tell the local switch whether to use VTP version 1 or 2.
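As a planning aid, the five steps above can be turned into a small Python helper that prints the global configuration commands for one switch. The helper vtp_config and the sample domain name and password are hypothetical. The command strings themselves are the ones named in the steps, and the sketch assumes they are entered in global configuration mode.

```python
def vtp_config(mode, domain, password=None, pruning=False, version=None):
    """Return the VTP global configuration commands for one switch."""
    if mode not in ("server", "client"):
        raise ValueError("mode must be 'server' or 'client'")
    lines = [
        f"vtp mode {mode}",        # Step 1
        f"vtp domain {domain}",    # Step 2 (case-sensitive)
    ]
    if password is not None:
        lines.append(f"vtp password {password}")   # Step 3 (optional)
    if pruning and mode == "server":
        lines.append("vtp pruning")                # Step 4 (servers only)
    if version is not None:
        if version not in (1, 2):
            raise ValueError("version must be 1 or 2")
        lines.append(f"vtp version {version}")     # Step 5 (optional)
    return lines


# Illustrative values only; choose your own domain name and password.
for switch, mode in (("SW1", "server"), ("SW2", "client")):
    print(f"! {switch}")
    print("\n".join(vtp_config(mode, "Example-Domain",
                               password="Example-Pass", pruning=True)))
```

Generating both configurations from the same arguments is one way to avoid a case mismatch in the domain name or password between switches.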

As a network to use in the upcoming configuration examples, Figure 5-7 shows a LAN with the current VTP settings on each switch. At the beginning of the example in this section, both switches have all default VTP configuration: VTP server mode with a null domain name and password. With these default settings, even if the link between two switches is a trunk, VTP would still not work.

Per the figure, the switches do have some related configuration beyond the VTP configuration. SW1 has been configured to know about two additional VLANs (VLAN 2 and 3). Additionally, both switches have been configured with IP addresses—a fact that will be useful in upcoming show command output.

To move toward using VTP in these switches, and synchronizing their VLAN configuration databases, Figure 5-8 repeats Figure 5-7, but with some new configuration settings in bold text. Note that both switches now use the same VTP domain name and password. Switch SW1 remains at the default setting of being a VTP server, while switch SW2 is now configured to be a VTP client. Now, with matching VTP domain and password, and with a trunk between the two switches, the two switches will use VTP successfully.

Example 5-1 shows the configuration shown in Figure 5-8 as added to each switch.

Example 5-1 Basic VTP Client and Server Configuration

Make sure to take the time to work through the configuration commands on both switches. The domain name and password, both case-sensitive, match. Also, SW2, as a client, does not need the vtp pruning command, because the VTP server dictates to the domain whether or not pruning is used throughout the domain. (Note that all VTP servers should be configured with the same VTP pruning setting.)

Verifying Switches Synchronized Databases

Configuring VTP takes only a little work, as shown in Example 5-1. Most of the interesting activity with VTP happens in what it learns dynamically, and how VTP accomplishes that learning. For instance, Figure 5-7 showed that switch SW1 had revision number 5 for its VLAN configuration database, while SW2’s was revision 1. Once the switches were configured as shown in Example 5-1, the following logic happened through an exchange of VTP messages between SW1 and SW2:

1. SW1 and SW2 exchanged VTP messages.

2. SW2 realized that its own revision number (1) was lower (worse) than SW1’s revision number 5.

3. SW2 received a copy of SW1’s VLAN database and updated SW2’s own VLAN (and related) configuration.

4. SW2’s revision number also updated to revision number 5.