Fundamentals of Ethernet LANs

INTERNOLD NETWORKS CCNA LIVE WEBCLASS (INCLW)

Most enterprise computer networks can be separated into two general types of technology: local-area networks (LAN) and wide-area networks (WAN).

LANs typically connect nearby devices: devices in the same room, in the same building, or in a campus of buildings.

In contrast, WANs connect devices that are typically relatively far apart.

Together, LANs and WANs create a complete enterprise computer network that does the job of any computer network: delivering data from one device to another.

Many types of LANs have existed over the years, but today’s networks use two general types of LANs: Ethernet LANs and wireless LANs.

Ethernet LANs happen to use cables for the links between nodes, and because many types of cables use copper wires, Ethernet LANs are often called wired LANs.

In comparison, wireless LANs do not use wires or cables, instead using radio waves for the links between nodes.

Overview of LANs

The term Ethernet refers to a family of LAN standards that together define the physical and data link layers of the world’s most popular wired LAN technology.

The standards, defined by the Institute of Electrical and Electronics Engineers (IEEE), define the cabling, the connectors on the ends of the cables, the protocol rules, and everything else required to create an Ethernet LAN.

SOHO LANs

To begin, first think about a small office/home office (SOHO) LAN today, specifically a LAN that uses only Ethernet LAN technology.

First, the LAN needs a device called an Ethernet LAN switch, which provides many physical ports into which cables can be connected.

An Ethernet LAN uses Ethernet cables, a general term for any cable that conforms to any of several Ethernet standards.

The LAN uses Ethernet cables to connect different Ethernet devices or nodes to one of the switch’s Ethernet ports.

The diagram below shows a SOHO Ethernet LAN. The figure shows a single LAN switch, five cables, and five other Ethernet nodes: three PCs, a printer, and one network device called a router. (The router connects the LAN to the WAN, in this case to the Internet.)

Small Ethernet-only SOHO LAN

Although the diagram above shows a simple Ethernet LAN, many SOHO Ethernet LANs today combine the router and switch into a single device.

Vendors sell consumer-grade integrated networking devices that work as a router and Ethernet switch, as well as doing other functions.

These devices typically have “router” on the packaging, but many models also have a four-port or eight-port Ethernet LAN switch built into the device.

Typical SOHO LANs today also support wireless LAN connections.

Ethernet defines wired LAN technology only; in other words, Ethernet LANs use cables.

However, you can build one LAN that uses both Ethernet LAN technology and wireless LAN technology, which is also defined by the IEEE.

Wireless LANs, defined by the IEEE using standards that begin with 802.11, use radio waves to send the bits from one node to the next.

Most wireless LANs rely on yet another networking device: a wireless LAN access point (AP).

The AP acts somewhat like an Ethernet switch, in that all the wireless LAN nodes communicate with the Ethernet switch by sending and receiving data with the wireless AP.

Of course, as a wireless device, the AP does not need Ethernet ports for cables, other than for a single Ethernet link to connect the AP to the Ethernet LAN, as shown below.

Note that this diagram shows the router, Ethernet switch, and wireless LAN access point as three separate devices so that you can better understand the different roles.

However, most SOHO networks today would use a single device, often labeled as a “wireless router,” that does all these functions.

Enterprise LANs

Enterprise networks have similar needs compared to a SOHO network, but on a much larger scale.

For example, enterprise Ethernet LANs begin with LAN switches installed in a wiring closet behind a locked door on each floor of a building.

The electricians install the Ethernet cabling from that wiring closet to cubicles and conference rooms where devices might need to connect to the LAN.

At the same time, most enterprises also support wireless LANs in the same space, to allow people to roam around and still work and to support a growing number of devices that do not have an Ethernet LAN interface.

Below is a conceptual view of a typical enterprise LAN in a three-story building.

Each floor has an Ethernet LAN switch and a wireless LAN AP.

To allow communication between floors, each per-floor switch connects to one centralized distribution switch.

For example, PC3 can send data to PC2, but it would first flow through switch SW3 to the first floor to the distribution switch (SWD) and then back up through switch SW2 on the second floor.

The figure also shows the typical way to connect a LAN to a WAN using a router. LAN switches and wireless access points work to create the LAN itself. Routers connect to both the LAN and the WAN.

To connect to the LAN, the router simply uses an Ethernet LAN interface and an Ethernet cable, as shown on the lower right of the diagram above.

Variety of Ethernet Physical Layer Standards

The term Ethernet refers to an entire family of standards.

Some standards define the specifics of how to send data over a particular type of cabling, and at a particular speed.

Other standards define protocols, or rules, that the Ethernet nodes must follow to be a part of an Ethernet LAN.

All these Ethernet standards come from the IEEE and include the number 802.3 as the beginning part of the standard name.

Ethernet supports a large variety of options for physical Ethernet links given its long history over the last 40 or so years.

Today, Ethernet includes many standards for different kinds of optical and copper cabling, and for speeds from 10 megabits per second (Mbps) up to 100 gigabits per second (Gbps).

The standards also differ as far as the types of cabling and the allowed length of the cabling.

The most fundamental cabling choice has to do with the materials used inside the cable for the physical transmission of bits: either copper wires or glass fibers.

The use of unshielded twisted-pair (UTP) cabling saves money compared to optical fibers, with Ethernet nodes using the wires inside the cable to send data over electrical circuits.

Fiber-optic cabling, the more expensive alternative, allows Ethernet nodes to send light over glass fibers in the center of the cable.

Although more expensive, optical cables typically allow longer cabling distances between nodes.

To be ready to choose the products to purchase for a new Ethernet LAN, a network engineer must know the names and features of the different Ethernet standards supported in Ethernet products. The IEEE defines Ethernet physical layer standards using a couple of naming conventions.

The formal name begins with 802.3 followed by some suffix letters.

The IEEE also uses more meaningful shortcut names that identify the speed, as well as a clue about whether the cabling is UTP (with a suffix that includes T) or fiber (with a suffix that includes X).

The table below lists a few Ethernet physical layer standards.

First, the table lists enough names so that you get a sense of the IEEE naming conventions.

It also lists the four most common standards that use UTP cabling, because this book’s discussion of Ethernet focuses mainly on the UTP options.
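The shortcut-name convention can be turned into a small parser. The sketch below is only a rule of thumb built from the description above (speed first, then a suffix containing T for UTP or X for fiber); the function name `describe_standard` is hypothetical, and real standard names include cases this simple rule does not cover.

```python
import re

def describe_standard(name: str) -> tuple[int, str]:
    """Return (speed in Mbps, cabling guess) for a name such as '1000BASE-T'."""
    match = re.fullmatch(r"(\d+)(G?)BASE-([A-Z0-9]+)", name.upper())
    if match is None:
        raise ValueError(f"not a recognized shortcut name: {name!r}")
    # A trailing G on the number means gigabits, so convert to Mbps.
    speed_mbps = int(match.group(1)) * (1000 if match.group(2) else 1)
    suffix = match.group(3)
    if "T" in suffix:        # T hints at twisted-pair (UTP) cabling
        cabling = "UTP"
    elif "X" in suffix:      # X hints at fiber, per the convention above
        cabling = "fiber"
    else:                    # the rule of thumb gives no answer here
        cabling = "unknown"
    return speed_mbps, cabling

for name in ("10BASE-T", "100BASE-T", "1000BASE-T", "1000BASE-LX", "10GBASE-T"):
    print(name, describe_standard(name))
```

Checking for T before X is a deliberate choice in this sketch, so that a suffix containing both letters is treated as UTP.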

Consistent Behavior over All Links Using the Ethernet Data Link Layer

While the physical layer standards focus on sending bits over a cable, the Ethernet data-link protocols focus on sending an Ethernet frame from source to destination Ethernet node. From a data-link perspective, nodes build and forward frames. The term frame specifically refers to the header and trailer of a data-link protocol, plus the data encapsulated inside that header and trailer.

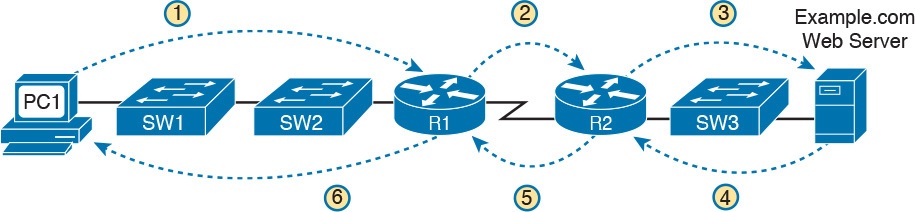

The diagram below shows an example of the process. In this case, PC1 sends an Ethernet frame to PC3. The frame travels over a UTP link to Ethernet switch SW1, then over fiber links to Ethernet switches SW2 and SW3, and finally over another UTP link to PC3. Note that the bits actually travel at four different speeds in this example: 10 Mbps, 1 Gbps, 10 Gbps, and 100 Mbps, respectively.

So, what is an Ethernet LAN? It is a combination of user devices, LAN switches, and different kinds of cabling. Each link can use different types of cables, at different speeds. However, they all work together to deliver Ethernet frames from the one device on the LAN to some other device.

Building Physical Ethernet Networks with UTP

Before the Ethernet network as a whole can send Ethernet frames between user devices, each node must be ready and able to send data over an individual physical link.

The three most commonly used Ethernet standards are 10BASE-T (Ethernet), 100BASE-T (Fast Ethernet, or FE), and 1000BASE-T (Gigabit Ethernet, or GE), which run over UTP cabling at 10 Mbps, 100 Mbps, and 1000 Mbps, respectively.

Transmitting Data Using Twisted Pairs

While it is true that Ethernet sends data over UTP cables, the physical means to send the data uses electricity that flows over the wires inside the UTP cable.

To better understand how Ethernet sends data using electricity, break the idea down into two parts: how to create an electrical circuit and then how to make that electrical signal communicate 1s and 0s.

First, to create one electrical circuit, Ethernet defines how to use the two wires inside a single twisted pair of wires, as shown on the diagram below.

The diagram does not show a UTP cable between two nodes, but instead shows two individual wires that are inside the UTP cable.

An electrical circuit requires a complete loop, so the two nodes, using circuitry on their Ethernet ports, connect the wires in one pair to complete a loop, allowing electricity to flow.

Note that in an actual UTP cable, the wires will be twisted together, instead of being parallel.

The twisting helps solve some important physical transmission issues.

When electrical current passes over any wire, it creates electromagnetic interference (EMI) that interferes with the electrical signals in nearby wires, including the wires in the same cable. (EMI between wire pairs in the same cable is called crosstalk.)

Twisting the wire pairs together helps cancel out most of the EMI, so most networking physical links that use copper wires use twisted pairs.

Breaking Down a UTP Ethernet Link

The term Ethernet link refers to any physical cable between two Ethernet nodes.

To learn how a UTP Ethernet link works, it helps to break down the physical link into its basic pieces, as shown below: the cable itself, the connectors on the ends of the cable, and the matching ports on the devices into which the connectors will be inserted.

Basic Components of an Ethernet Link

First, think about the UTP cable itself. The cable holds some copper wires, grouped as twisted pairs.

The 10BASE-T and 100BASE-T standards require two pairs of wires, while the 1000BASE-T standard requires four pairs.

Each wire has a color-coded plastic coating, with the two wires in a pair sharing a color scheme.

For example, for the blue wire pair, one wire’s coating is all blue, while the other wire’s coating is blue-and-white striped.

Many Ethernet UTP cables use an RJ-45 connector on both ends.

The RJ-45 connector has eight physical locations into which the eight wires in the cable can be inserted, called pin positions, or simply pins.

These pins create a place where the ends of the copper wires can touch the electronics inside the nodes at the end of the physical link so that electricity can flow.

To complete the physical link, the nodes each need an RJ-45 Ethernet port that matches the RJ-45 connectors on the cable so that the connectors on the ends of the cable can connect to each node.

Computers often include this RJ-45 Ethernet port as part of a network interface card (NIC), which can be an expansion card on the PC or can be built in to the system itself. Switches typically have many RJ-45 ports because switches give user devices a place to connect to the Ethernet LAN.

RJ-45 Connectors and Ports

The diagram above shows a connector on the left and ports on the right. The left shows the eight pin positions in the end of the RJ-45 connector. The upper right shows an Ethernet NIC that is not yet installed in a computer.

The lower-right part of the figure shows the side of a Cisco 2960 switch, with multiple RJ-45 ports, allowing multiple devices to easily connect to the Ethernet network.

Finally, while RJ-45 connectors with UTP cabling are common, Cisco LAN switches often support other types of connectors as well. When you buy one of the many models of Cisco switches, you need to think about the mix and number of each type of physical port you want on the switch.

To give its customers flexibility as to the type of Ethernet links, even after the customer has bought the switch, Cisco switches include some physical ports whose port hardware (the transceiver) can be changed later, after you purchase the switch.

For example, the photo below shows a Cisco switch with one of the swappable transceivers. In this case, the figure shows an enhanced small form-factor pluggable (SFP+) transceiver, which runs at 10 Gbps, just outside two SFP+ slots on a Cisco 3560CX switch. The SFP+ itself is the silver-colored part below the switch, with a black cable connected to it.

UTP Cabling Pinouts for 10BASE-T and 100BASE-T

Straight-Through Cable Pinout

10BASE-T and 100BASE-T use two pairs of wires in a UTP cable, one for each direction, as shown in the diagram below.

The figure shows four wires, all of which sit inside a single UTP cable that connects a PC and a LAN switch. In this example, the PC on the left transmits using the top pair, and the switch on the right transmits using the bottom pair.

For correct transmission over the link, the wires in the UTP cable must be connected to the correct pin positions in the RJ-45 connectors.

For example, in the diagram above, the transmitter on the PC on the left must know the pin positions of the two wires it should use to transmit. Those two wires must be connected to the correct pins in the RJ-45 connector on the switch, so that the switch’s receiver logic can use the correct wires.

To understand the wiring of the cable—which wires need to be in which pin positions on both ends of the cable—you need to first understand how the NICs and switches work.

As a rule, Ethernet NIC transmitters use the pair connected to pins 1 and 2; the NIC receivers use a pair of wires at pin positions 3 and 6. LAN switches, knowing those facts about what Ethernet NICs do, do the opposite: Their receivers use the wire pair at pins 1 and 2, and their transmitters use the wire pair at pins 3 and 6.

To allow a PC NIC to communicate with a switch, the UTP cable must also use a straight-through cable pinout.

The term pinout refers to the wiring of which color wire is placed in each of the eight numbered pin positions in the RJ-45 connector.

An Ethernet straight-through cable connects the wire at pin 1 on one end of the cable to pin 1 at the other end of the cable; the wire at pin 2 needs to connect to pin 2 on the other end of the cable; pin 3 on one end connects to pin 3 on the other, and so on.

Also, it uses the wires in one wire pair at pins 1 and 2, and another pair at pins 3 and 6.

The diagram below shows one final perspective on the straight-through cable pinout.

In this case, PC Larry connects to a LAN switch.

Note that the diagram again does not show the UTP cable, but instead shows the wires that sit inside the cable, to emphasize the idea of wire pairs and pins.

Crossover Cable Pinout

A straight-through cable works correctly when the nodes use opposite pairs for transmitting data.

However, when two like devices connect to an Ethernet link, they both transmit on the same pins.

In that case, you then need another type of cabling pinout called a crossover cable.

The crossover cable pinout crosses the pair at the transmit pins on each device to the receive pins on the opposite device.

This concept is much clearer with a diagram, shown below.

The figure shows what happens on a link between two switches. The two switches both transmit on the pair at pins 3 and 6, and they both receive on the pair at pins 1 and 2. So, the cable must connect a pair at pins 3 and 6 on each side to pins 1 and 2 on the other side, connecting to the other node’s receiver logic. The top of the figure shows the literal pinouts, and the bottom half shows a conceptual diagram.

Choosing the Right Cable Pinouts

- Crossover cable: If the endpoints transmit on the same pin pair

- Straight-through cable: If the endpoints transmit on different pin pairs
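The two rules above reduce to a single comparison of transmit pin pairs. The following is a minimal sketch that covers only the two device types whose pins are stated in this section (PC NICs and LAN switches); the table `TRANSMIT_PINS` and the function `cable_for` are hypothetical names chosen for illustration.

```python
# Transmit pin pairs as described earlier in this section.
TRANSMIT_PINS = {
    "pc": (1, 2),      # NIC transmitters use pins 1 and 2
    "switch": (3, 6),  # switch transmitters use pins 3 and 6
}

def cable_for(device_a: str, device_b: str) -> str:
    """Pick the 10BASE-T/100BASE-T cable pinout for a link between two devices."""
    same_pair = TRANSMIT_PINS[device_a] == TRANSMIT_PINS[device_b]
    return "crossover" if same_pair else "straight-through"

print(cable_for("pc", "switch"))      # straight-through
print(cable_for("switch", "switch"))  # crossover
print(cable_for("pc", "pc"))          # crossover
```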

The diagram below shows a LAN in a single building. In this case, several straight-through cables are used to connect PCs to switches. In addition, the cables connecting the switches require crossover cables.

NOTE: If you have some experience with installing LANs, you might be thinking that you have used the wrong cable before (straight-through or crossover) but the cable worked. Cisco switches have a feature called auto-MDIX that notices when the wrong cable is used and automatically changes its logic to make the link work.

However, for the exams, be ready to identify whether the correct cable is shown in the diagrams.

UTP Cabling Pinouts for 1000BASE-T

1000BASE-T (Gigabit Ethernet) differs from 10BASE-T and 100BASE-T as far as the cabling and pinouts.

First, 1000BASE-T requires four wire pairs.

Second, it uses more advanced electronics that allow both ends to transmit and receive simultaneously on each wire pair.

However, the wiring pinouts for 1000BASE-T work almost identically to the earlier standards, adding details for the additional two pairs.

The straight-through cable connects each pin with the same numbered pin on the other side, but it does so for all eight pins—pin 1 to pin 1, pin 2 to pin 2, up through pin 8.

It keeps one pair at pins 1 and 2 and another at pins 3 and 6, just like in the earlier wiring. It adds a pair at pins 4 and 5 and the final pair at pins 7 and 8 as shown in the diagram below.

The Gigabit Ethernet crossover cable crosses the same two wire pairs as the crossover cable for the other types of Ethernet (the pairs at pins 1,2 and 3,6). It also crosses the two new pairs (the pair at pins 4,5 with the pair at pins 7,8).
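One way to check a pinout is to write it as a pin-to-pin mapping and follow a wire pair from one end of the cable to the other. The sketch below encodes the straight-through and Gigabit crossover pinouts described above; the dictionary and function names are hypothetical.

```python
# Near-end pin -> far-end pin for each cable type.
STRAIGHT_THROUGH = {pin: pin for pin in range(1, 9)}
CROSSOVER_1000BASE_T = {
    1: 3, 2: 6,   # pair at pins 1,2 crosses to pins 3,6
    3: 1, 6: 2,   # pair at pins 3,6 crosses to pins 1,2
    4: 7, 5: 8,   # pair at pins 4,5 crosses to pins 7,8
    7: 4, 8: 5,   # pair at pins 7,8 crosses to pins 4,5
}

def far_end_pins(near_end_pins: tuple[int, int], cable: dict[int, int]) -> tuple[int, ...]:
    """Return the pins where a wire pair arrives at the other end of the cable."""
    return tuple(cable[pin] for pin in near_end_pins)

print(far_end_pins((3, 6), CROSSOVER_1000BASE_T))  # (1, 2)
print(far_end_pins((4, 5), CROSSOVER_1000BASE_T))  # (7, 8)
print(far_end_pins((1, 2), STRAIGHT_THROUGH))      # (1, 2)
```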

Sending Data in Ethernet Networks

Although physical layer standards vary quite a bit, other parts of the Ethernet standards work the same way, regardless of the type of physical Ethernet link.

Ethernet Data-Link Protocols

One of the most significant strengths of the Ethernet family of protocols is that these protocols use the same data-link standard.

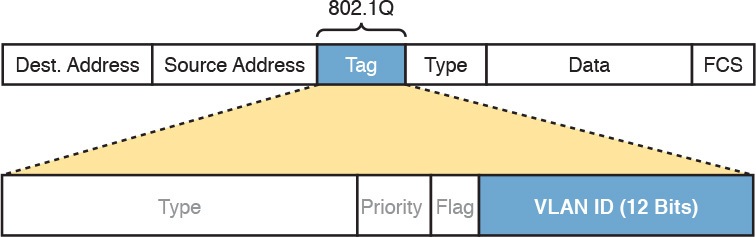

The Ethernet data-link protocol defines the Ethernet frame: an Ethernet header at the front, the encapsulated data in the middle, and an Ethernet trailer at the end as shown in the diagrams below with the description of each field.

Commonly Used Ethernet Frame Format

IEEE 802.3 Ethernet Header and Trailer Fields

Ethernet Addressing

The source and destination Ethernet address fields play a huge role in how Ethernet LANs work.

The general idea for each is relatively simple: The sending node puts its own address in the source address field and the intended Ethernet destination device’s address in the destination address field.

The sender transmits the frame, expecting that the Ethernet LAN, as a whole, will deliver the frame to that correct destination.

Ethernet addresses, also called Media Access Control (MAC) addresses, are 6-byte-long (48-bit-long) binary numbers.

MAC addresses are usually written as 12-digit hexadecimal numbers.

Cisco devices typically add some periods to the number for easier readability as well; for example, a Cisco switch might list a MAC address as 0000.0C12.3456.
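The relationship between the 6-byte value and its written forms can be illustrated in a few lines. This is a sketch only: the helper names `parse_mac`, `to_cisco`, and `to_colon` are hypothetical, and the colon-separated form is included as another widely used notation that this section does not discuss.

```python
def parse_mac(text: str) -> bytes:
    """Return the 6 raw bytes of a MAC address written with '.', ':' or '-' separators."""
    hex_digits = "".join(ch for ch in text if ch not in ".:-")
    if len(hex_digits) != 12:
        raise ValueError(f"expected 12 hex digits, got {len(hex_digits)}")
    return bytes.fromhex(hex_digits)

def to_cisco(mac: bytes) -> str:
    """Format as three groups of four hex digits, the style Cisco devices display."""
    digits = mac.hex().upper()
    return ".".join(digits[i:i + 4] for i in range(0, 12, 4))

def to_colon(mac: bytes) -> str:
    """Format as six colon-separated bytes."""
    return ":".join(f"{byte:02x}" for byte in mac)

mac = parse_mac("0000.0C12.3456")
print(len(mac) * 8)   # 48
print(to_cisco(mac))  # 0000.0C12.3456
print(to_colon(mac))  # 00:00:0c:12:34:56
```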

Most MAC addresses represent a single NIC or other Ethernet port, so these addresses are often called a unicast Ethernet address.

The term unicast is simply a formal way to refer to the fact that the address represents one interface to the Ethernet LAN. (This term also contrasts with two other types of Ethernet addresses, broadcast and multicast, which will be defined later.)

The entire idea of sending data to a destination unicast MAC address works well, but it works only if all the unicast MAC addresses are unique.

If two NICs tried to use the same MAC address, there could be confusion.

If two PCs on the same Ethernet tried to use the same MAC address, to which PC should frames sent to that MAC address be delivered?

Ethernet solves this problem using an administrative process so that, at the time of manufacture, all Ethernet devices are assigned a universally unique MAC address.

Before a manufacturer can build Ethernet products, it must ask the IEEE to assign the manufacturer a universally unique 3-byte code, called the organizationally unique identifier (OUI).

The manufacturer agrees to give all NICs (and other Ethernet products) a MAC address that begins with its assigned 3-byte OUI.

The manufacturer also assigns a unique value for the last 3 bytes, a number that the manufacturer has never used with that OUI. As a result, no two devices should ever have the same MAC address.

The diagram below shows the structure of the unicast MAC address, with the OUI.

Structure of Unicast Ethernet Addresses
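Splitting an address into its two halves is a matter of slicing the 6 bytes. A minimal sketch, using the example address from earlier in this section and a hypothetical helper name `split_mac`:

```python
def split_mac(mac: bytes) -> tuple[str, str]:
    """Return (OUI, vendor-assigned part) as hex strings for a 6-byte MAC address."""
    if len(mac) != 6:
        raise ValueError("a MAC address is exactly 6 bytes long")
    oui = mac[:3].hex().upper()              # first 3 bytes: assigned by the IEEE
    vendor_assigned = mac[3:].hex().upper()  # last 3 bytes: chosen by the manufacturer
    return oui, vendor_assigned

oui, vendor_assigned = split_mac(bytes.fromhex("00000C123456"))
print(oui)              # 00000C
print(vendor_assigned)  # 123456
```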

Ethernet addresses go by many names:

- LAN address

- Ethernet address

- hardware address

- burned-in address (BIA)

- physical address

- universal address

- MAC address

The term burned-in address (BIA) refers to the idea that a permanent MAC address has been encoded (burned into) the ROM chip on the NIC.

As another example, the IEEE uses the term universal address to emphasize the fact that the address assigned to a NIC by a manufacturer should be unique among all MAC addresses in the universe.

Broadcast and Multicast Address

In addition to unicast addresses, Ethernet also uses group addresses.

Group addresses identify more than one LAN interface card. A frame sent to a group address might be delivered to a small set of devices on the LAN, or even to all devices on the LAN.

In fact, the IEEE defines two general categories of group addresses for Ethernet:

- Broadcast address: Frames sent to this address should be delivered to all devices on the Ethernet LAN. It has a value of FFFF.FFFF.FFFF.

- Multicast addresses: Frames sent to a multicast Ethernet address will be copied and forwarded to a subset of the devices on the LAN that volunteers to receive frames sent to a specific multicast address.
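The three address categories can be told apart by inspecting the address bytes. The sketch below relies on one detail this section does not state: in IEEE 802 addressing, the least significant bit of the first byte marks a group address. The function name `classify` and the sample multicast address are illustrative choices.

```python
BROADCAST = bytes.fromhex("FFFFFFFFFFFF")

def classify(mac: bytes) -> str:
    """Return 'broadcast', 'multicast', or 'unicast' for a 6-byte MAC address."""
    if mac == BROADCAST:
        return "broadcast"
    # Assumption beyond the text above: the low-order bit of the first
    # byte is 1 for group addresses and 0 for unicast addresses.
    if mac[0] & 0x01:
        return "multicast"
    return "unicast"

print(classify(bytes.fromhex("00000C123456")))  # unicast
print(classify(bytes.fromhex("01005E000001")))  # multicast
print(classify(BROADCAST))                      # broadcast
```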

The diagram below summarizes most of the details about MAC addresses.

LAN MAC Address Terminology and Features

Identifying Network Layer Protocols with the Ethernet Type Field

While the Ethernet header’s address fields play an important and more obvious role in Ethernet LANs, the Ethernet Type field plays a much less obvious role.

The Ethernet Type field, or EtherType, sits in the Ethernet data link layer header, but its purpose is to directly help the network processing on routers and hosts.

Basically, the Type field identifies the type of network layer (Layer 3) packet that sits inside the Ethernet frame.

First, think about what sits inside the data part of the Ethernet frame shown in the diagram below.

Typically, it holds the network layer packet created by the network layer protocol on some device in the network.

Over the years, those protocols have included IBM Systems Network Architecture (SNA), Novell NetWare, Digital Equipment Corporation’s DECnet, and Apple Computer’s AppleTalk.

Today, the most common network layer protocols are both from TCP/IP: IP version 4 (IPv4) and IP version 6 (IPv6).

The original host has a place to insert a value (a hexadecimal number) to identify the type of packet encapsulated inside the Ethernet frame.

However, what number should the sender put in the header to identify an IPv4 packet as the type? Or an IPv6 packet?

As it turns out, the IEEE manages a list of EtherType values, so that every network layer protocol that needs a unique EtherType value can have a number.

The sender just has to know the list. (To view the complete list, go to www.ieee.org and search for EtherType.)

For example, a host can send one Ethernet frame with an IPv4 packet and the next Ethernet frame with an IPv6 packet.

Each frame would have a different Ethernet Type field value, using the values reserved by the IEEE, as shown in the diagram below.

Use of Ethernet Type Field
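To make the role of the Type field concrete, the sketch below builds the 14-byte header (destination address, source address, Type) and reads the Type back to decide what kind of packet follows. The values 0x0800 for IPv4 and 0x86DD for IPv6 are the registered EtherType values; the function names and the placeholder payload are hypothetical.

```python
import struct

ETHERTYPES = {0x0800: "IPv4", 0x86DD: "IPv6"}

def build_header(dst: bytes, src: bytes, ethertype: int) -> bytes:
    """Pack destination MAC, source MAC, and the 2-byte Type field in network byte order."""
    return struct.pack("!6s6sH", dst, src, ethertype)

def packet_type(frame: bytes) -> str:
    """Read the Type field, which follows the two 6-byte address fields."""
    (ethertype,) = struct.unpack_from("!H", frame, 12)
    return ETHERTYPES.get(ethertype, f"unknown (0x{ethertype:04X})")

dst = bytes.fromhex("FFFFFFFFFFFF")
src = bytes.fromhex("00000C123456")
frame_v4 = build_header(dst, src, 0x0800) + b"placeholder IPv4 packet"
frame_v6 = build_header(dst, src, 0x86DD) + b"placeholder IPv6 packet"
print(packet_type(frame_v4))  # IPv4
print(packet_type(frame_v6))  # IPv6
```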

Error Detection with FCS

Ethernet also defines a way for nodes to find out whether a frame’s bits changed while crossing over an Ethernet link. (Usually, the bits could change because of some kind of electrical interference, or a bad NIC.)

Ethernet, like most data-link protocols, uses a field in the data-link trailer for the purpose of error detection.

The Ethernet Frame Check Sequence (FCS) field in the Ethernet trailer—the only field in the Ethernet trailer—gives the receiving node a way to compare results with the sender, to discover whether errors occurred in the frame.

The sender applies a complex math formula to the frame before sending it, storing the result of the formula in the FCS field.

The receiver applies the same math formula to the received frame. The receiver then compares its own results with the sender’s results. If the results are the same, the frame did not change; otherwise, an error occurred and the receiver discards the frame.
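The sender and receiver logic can be sketched with a CRC-32 checksum, the kind of formula Ethernet uses for the FCS. This is an illustration rather than a bit-exact model of a NIC: it uses Python's `zlib.crc32`, treats the frame as a plain byte string, and the helper names `add_fcs` and `fcs_ok` are hypothetical.

```python
import zlib

def add_fcs(frame_without_fcs: bytes) -> bytes:
    """Sender side: compute the checksum and append it as a 4-byte trailer."""
    fcs = zlib.crc32(frame_without_fcs)
    return frame_without_fcs + fcs.to_bytes(4, "little")

def fcs_ok(frame: bytes) -> bool:
    """Receiver side: recompute the checksum and compare it with the trailer."""
    body, received_fcs = frame[:-4], frame[-4:]
    return zlib.crc32(body).to_bytes(4, "little") == received_fcs

frame = add_fcs(b"header and data bytes")
print(fcs_ok(frame))      # True

# Flip one bit to imitate electrical interference on the link.
damaged = bytes([frame[0] ^ 0x01]) + frame[1:]
print(fcs_ok(damaged))    # False, so the receiver would discard the frame
```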

Note that error detection does not also mean error recovery.

Ethernet defines that the errored frame should be discarded, but Ethernet does not attempt to recover the lost frame.

Other protocols, notably TCP, recover the lost data by noticing that it is lost and sending the data again.

Sending Ethernet Frames with Switches and Hubs

Ethernet LANs behave slightly differently depending on whether the LAN has mostly modern devices, in particular, LAN switches instead of some older LAN devices called LAN hubs.

Basically, the use of more modern switches allows the use of full-duplex logic, which is much faster and simpler than half-duplex logic, which is required when using hubs.

Sending in Modern Ethernet LANs Using Full Duplex

Modern Ethernet LANs use a variety of Ethernet physical standards, but with standard Ethernet frames that can flow over any of these types of physical links.

Each individual link can run at a different speed, but each link allows the attached nodes to send the bits in the frame to the next node. They must work together to deliver the data from the sending Ethernet node to the destination node.

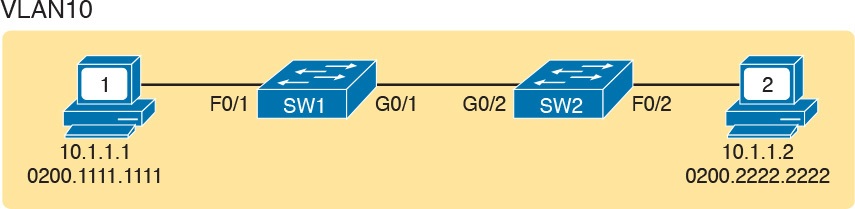

The process is relatively simple, on purpose; the simplicity lets each device send a large number of frames per second. The diagram below shows an example in which PC1 sends an Ethernet frame to PC2.

Example of Sending Data in a Modern Ethernet LAN

The steps in the diagram correspond to the following list.

- PC1 builds and sends the original Ethernet frame, using its own MAC address as the source address and PC2’s MAC address as the destination address.

- Switch SW1 receives and forwards the Ethernet frame out its G0/1 interface (short for Gigabit interface 0/1) to SW2.

- Switch SW2 receives and forwards the Ethernet frame out its F0/2 interface (short for Fast Ethernet interface 0/2) to PC2.

- PC2 receives the frame, recognizes the destination MAC address as its own, and processes the frame.

The Ethernet network in the diagram uses full duplex on each link, but the concept might be difficult to see.

Full duplex means that the NIC or switch port has no half-duplex restrictions. So, to understand full duplex, you need to understand half duplex, as follows:

- Half duplex: The device must wait to send if it is currently receiving a frame; in other words, it cannot send and receive at the same time.

- Full duplex: The device does not have to wait before sending; it can send and receive at the same time.

So, with all PCs and LAN switches, and no LAN hubs, all the nodes can use full duplex.

All nodes can send and receive on their port at the same instant in time.

For example, in the above diagram, PC1 and PC2 could send frames to each other simultaneously, in both directions, without any half-duplex restrictions.

Using Half Duplex with LAN Hubs

To understand the need for half-duplex logic in some cases, you have to understand a little about an older type of networking device called a LAN hub.

When the IEEE first introduced 10BASE-T in 1990, Ethernet did not yet include LAN switches.

Instead of switches, vendors created LAN hubs. The LAN hub provided a number of RJ-45 ports as a place to connect links to PCs, just like a LAN switch, but it used different rules for forwarding data.

LAN hubs forward data using physical layer standards, and are therefore considered to be Layer 1 devices.

When an electrical signal comes in one hub port, the hub repeats that electrical signal out all other ports (except the incoming port).

By doing so, the data reaches all the rest of the nodes connected to the hub, so the data hopefully reaches the correct destination.

The hub has no concept of Ethernet frames, of addresses, and so on.

The downside of using LAN hubs is that if two or more devices transmit a signal at the same instant, the electrical signals collide and become garbled.

The hub repeats all received electrical signals, even if it receives multiple signals at the same time.

For example, the diagram below shows the idea, with PCs Archie and Bob sending an electrical signal at the same instant of time (at Steps 1A and 1B) and the hub repeating both electrical signals out toward Larry on the left (Step 2).

Collision Occurring Because of LAN Hub Behavior

If you replace the hub in the diagram above with a LAN switch, the switch prevents the collision on the left.

The switch operates as a Layer 2 device, meaning that it looks at the data-link header and trailer.

A switch would look at the MAC addresses, and even if the switch needed to forward both frames to Larry on the left, the switch would send one frame and queue the other frame until the first frame was finished.
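The difference between the two devices can be caricatured in a few lines. The toy model below ignores addresses, timing, and real signaling, and only contrasts "repeat everything at once" with "send one frame, queue the other"; the function names are hypothetical.

```python
from collections import deque

def hub_repeat(simultaneous_signals: list[str]):
    """What a node on another hub port hears during one instant."""
    if len(simultaneous_signals) > 1:
        return "collision"  # overlapping signals are garbled
    return simultaneous_signals[0] if simultaneous_signals else None

def switch_forward(simultaneous_frames: list[str]) -> list[str]:
    """Frames a switch sends out one port, one at a time, in arrival order."""
    queue = deque(simultaneous_frames)
    sent = []
    while queue:
        sent.append(queue.popleft())  # the next frame waits until this one is done
    return sent

arrivals = ["frame from Archie", "frame from Bob"]
print(hub_repeat(arrivals))      # collision
print(switch_forward(arrivals))  # ['frame from Archie', 'frame from Bob']
```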

Now back to the issue created by the hub’s logic: collisions.

To prevent these collisions, the Ethernet nodes must use half-duplex logic instead of full-duplex logic.

A problem occurs only when two or more devices send at the same time; half-duplex logic tells the nodes that if someone else is sending, wait before sending.

In the diagram above, imagine that Archie began sending his frame early enough so that Bob received the first bits of that frame before Bob tried to send his own frame. Bob, at Step 1B, would notice that he was receiving a frame from someone else, and using half-duplex logic, would simply wait to send the frame listed at Step 1B.

Nodes that use half-duplex logic actually use a relatively well-known algorithm called carrier sense multiple access with collision detection (CSMA/CD).

The algorithm takes care of the obvious cases but also the cases caused by unfortunate timing.

For example, two nodes could check for an incoming frame at the exact same instant, both realize that no other node is sending, and both send their frames at the exact same instant, causing a collision.

CSMA/CD covers these cases as well, as follows:

- Step 1. A device with a frame to send listens until the Ethernet is not busy.

- Step 2. When the Ethernet is not busy, the sender begins sending the frame.

- Step 3. The sender listens while sending to discover whether a collision occurs; collisions can have many causes, including unfortunate timing. If a collision occurs, all currently sending nodes do the following:

  - A. They send a jamming signal that tells all nodes that a collision happened.

  - B. They independently choose a random time to wait before trying again, to avoid unfortunate timing.

  - C. The next attempt starts again at Step 1.
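The steps above can be sketched as a toy simulation. This is an illustration under stated assumptions, not how a real NIC implements CSMA/CD: time is divided into slots, every frame takes exactly one slot (so the medium is always idle at the start of a slot), and the random wait is drawn from a window that doubles after each collision. The function name `simulate` and the node names are chosen only for the example.

```python
import random

def simulate(node_names, seed=0, max_slots=1000):
    """Toy slotted model of CSMA/CD; returns nodes in the order they succeed."""
    rng = random.Random(seed)
    # Assumption: every node has one frame and wants to send in slot 0.
    next_try = {name: 0 for name in node_names}
    attempts = {name: 0 for name in node_names}
    delivered = []
    for slot in range(max_slots):
        if not next_try:
            break
        # Steps 1 and 2: nodes whose wait has expired find the medium idle and send.
        senders = [n for n, t in next_try.items() if t <= slot]
        if len(senders) == 1:
            # Step 3, no collision: the frame gets through.
            delivered.append(senders[0])
            del next_try[senders[0]]
        elif len(senders) > 1:
            # Step 3A: collision detected; a jamming signal would be sent here.
            for n in senders:
                attempts[n] += 1
                # Step 3B: each sender independently picks a random wait.
                # Assumption: the window doubles per collision, capped at 2**10.
                window = 2 ** min(attempts[n], 10)
                next_try[n] = slot + 1 + rng.randrange(window)
            # Step 3C: the next attempt starts again at Step 1 (next loop pass).
    return delivered

order = simulate(["Archie", "Bob"])
print(order)
```

Both nodes collide in the first slot because they start at the same instant; the independent random waits are what eventually separate them.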

Although most modern LANs do not often use hubs, and therefore do not need to use half duplex, enough old hubs still exist in enterprise networks so that you need to be ready to understand duplex issues.

Each NIC and switch port has a duplex setting. For all links between PCs and switches, or between switches, use full duplex.

However, for any link connected to a LAN hub, the connected switch port or NIC should use half duplex. Note that the hub itself does not use half-duplex logic, instead just repeating incoming signals out every other port.

The diagram below shows an example, with full-duplex links on the left and a single LAN hub on the right. The hub then requires SW2’s F0/2 interface to use half-duplex logic, along with the PCs connected to the hub.

Full and Half Duplex in an Ethernet LAN

Perspectives on IPv4 Subnetting


Most entry-level networking jobs require you to operate and troubleshoot a network using a preexisting IP addressing and subnetting plan.

The CCNA Routing and Switching exams assess your readiness to use preexisting IP addressing and subnetting information to perform typical operations tasks, like monitoring the network, reacting to possible problems, and troubleshooting those problems.

However, you also need to understand how networks are designed and why.

The thought processes used when monitoring any network continually ask the question, “Is the network working as designed?”

If a problem exists, you must consider questions such as, “What happens when the network works normally, and what is different right now?”

Both questions require you to understand the intended design of the network, including details of the IP addressing and subnetting design.

This lesson provides some perspectives and answers for the bigger issues in IPv4 addressing.

What addresses can be used so that they work properly? What addresses should be used? When told to use certain numbers, what does that tell you about the choices made by some other network engineer?

How do these choices impact the practical job of configuring switches, routers, hosts, and operating the network on a daily basis?

This lesson hopes to answer these questions while revealing details of how IPv4 addresses work.

Introduction to Subnetting

Say you just happened to be at the sandwich shop when they were selling the world’s longest sandwich. You’re pretty hungry, so you go for it. Now you have one sandwich, but at over 2 kilometers long, you realize it’s a bit more than you need for lunch all by yourself.

To make the sandwich more useful (and more portable), you chop the sandwich into meal-size pieces, and give the pieces to other folks around you, who are also ready for lunch.

Huh? Well, subnetting, at least the main concept, is similar to this sandwich story. You start with one network, but it is just one large network.

As a single large entity, it might not be useful, and it is probably far too large. To make it useful, you chop it into smaller pieces, called subnets, and assign those subnets to be used in different parts of the enterprise internetwork.

This lesson introduces IP subnetting. First, it shows the general ideas behind a completed subnet design that indeed chops (or subnets) one network into subnets.

Subnetting Defined Through a Simple Example

An IP network—in other words, a Class A, B, or C network—is simply a set of consecutively numbered IP addresses that follows some preset rules.

These Class A, B, and C rules define that for a given network, all the addresses in the network have the same value in some of the octets of the addresses.

For example, Class B network 172.16.0.0 consists of all IP addresses that begin with 172.16: 172.16.0.0, 172.16.0.1, 172.16.0.2, and so on, through 172.16.255.255.

Another example: Class A network 10.0.0.0 includes all addresses that begin with 10.

An IP subnet is simply a subset of a Class A, B, or C network. In fact, the word subnet is a shortened version of the phrase subdivided network.

For example, one subnet of Class B network 172.16.0.0 could be the set of all IP addresses that begin with 172.16.1, and would include 172.16.1.0, 172.16.1.1, 172.16.1.2, and so on, up through 172.16.1.255.

Another subnet of that same Class B network could be all addresses that begin with 172.16.2.
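As a quick check of the example above, the following sketch uses Python's standard `ipaddress` module. The prefix lengths `/16` and `/24` are how the module expresses "same first two octets" and "same first three octets"; that notation is an assumption of this sketch and has not been introduced in the lesson yet.

```python
import ipaddress

class_b = ipaddress.ip_network("172.16.0.0/16")   # all addresses beginning with 172.16
subnet = ipaddress.ip_network("172.16.1.0/24")    # all addresses beginning with 172.16.1

# The subnet is a subset of the Class B network.
is_subset = subnet.subnet_of(class_b)

# Lowest and highest numbers in the subnet.
first, last = subnet[0], subnet[-1]

# An address beginning with 172.16.2 belongs to a different subnet.
other_in_subnet = ipaddress.ip_address("172.16.2.7") in subnet

print(is_subset, first, last, other_in_subnet)
```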

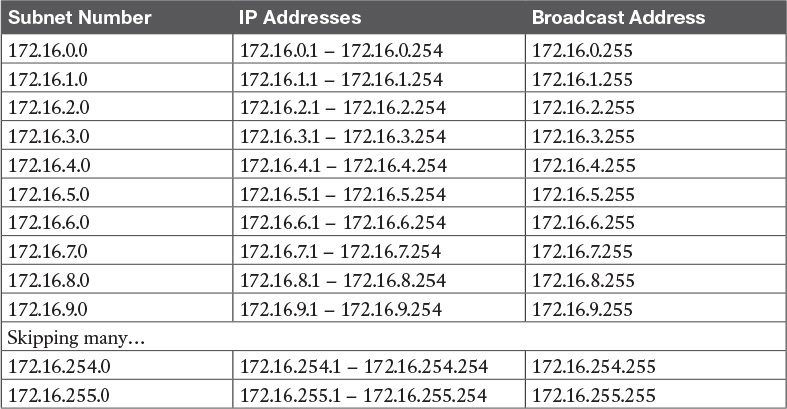

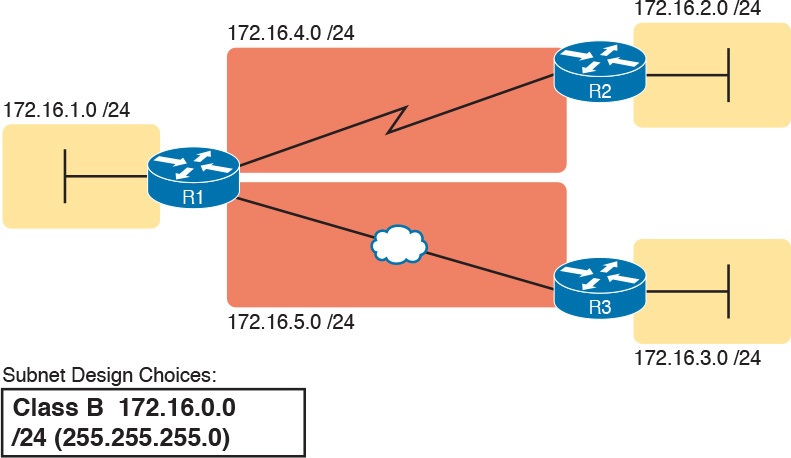

To give you a general idea, Figure 1 shows some basic documentation from a completed subnet design that could be used when an engineer subnets Class B network 172.16.0.0.

Figure 1: Subnet Plan Document

The design shows five subnets: one for each of the three LANs and one each for the two WAN links. The small text note shows the rationale used by the engineer for the subnets: Each subnet includes addresses that have the same value in the first three octets.

For example, for the LAN on the left, the number shows 172.16.1.__, meaning “all addresses that begin with 172.16.1.”

Also, note that the design, as shown, does not use all the addresses in Class B network 172.16.0.0, so the engineer has left plenty of room for growth.

Operational View Versus Design View of Subnetting

Most IT jobs require you to work with subnetting from an operational view. That is, someone else, before you got the job, designed how IP addressing and subnetting would work for that particular enterprise network. You need to interpret what someone else has already chosen.

To fully understand IP addressing and subnetting, you need to think about subnetting from both a design and operational perspective.

For example, Figure 1 simply states that in all these subnets, the first three octets must be equal. Why was that convention chosen? What alternatives exist? Would those alternatives be better for your internetwork today? All these questions relate more to subnetting design than to operation.

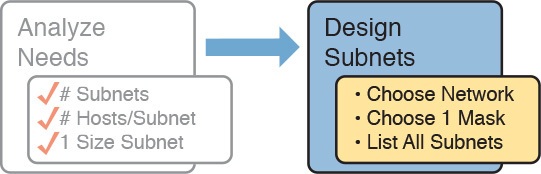

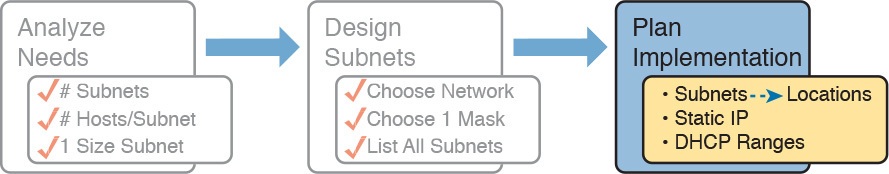

The remaining three main sections of this lesson examine each of the steps listed in Figure 2, in sequence.

Figure 2: Subnet Planning, Design, and Implementation Tasks

Analyze Subnetting and Addressing Needs

This section discusses the meaning of four basic questions that can be used to analyze the addressing and subnetting needs for any new or changing enterprise network:

- Which hosts should be grouped together into a subnet?

- How many subnets does this network require?

- How many host IP addresses does each subnet require?

- Will we use a single subnet size for simplicity, or not?

Rules About Which Hosts Are in Which Subnet

Every device that connects to an IP internetwork needs to have an IP address. These devices include computers used by end users, servers, mobile phones, laptops, IP phones, tablets, and networking devices like routers, switches, and firewalls. In short, any device that uses IP to send and receive packets needs an IP address.

NOTE: When discussing IP addressing, the term network has specific meaning: a Class A, B, or C IP network. To avoid confusion with that use of the term network, this lesson uses the terms internetwork and enterprise network when referring to a collection of hosts, routers, switches, and so on.

The IP addresses must be assigned according to some basic rules, and for good reasons. To make routing work efficiently, IP addressing rules group addresses into groups called subnets. The rules are as follows:

- Addresses in the same subnet are not separated by a router.

- Addresses in different subnets are separated by at least one router.

Figure 3 shows the general concept, with hosts A and B in one subnet and host C in another. In particular, note that hosts A and B are not separated from each other by any routers. However, host C, separated from A and B by at least one router, must be in a different subnet.

Figure 3: PC A and B in One Subnet, and PC C in a Different Subnet
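The two grouping rules can be expressed as a simple comparison. In this sketch the host addresses and the `/24` prefix length are hypothetical values chosen for illustration; the figure does not list them. The helper `same_subnet` is likewise a name invented for the example.

```python
import ipaddress

def same_subnet(a, b):
    """Return True when two interface addresses fall in the same subnet."""
    return ipaddress.ip_interface(a).network == ipaddress.ip_interface(b).network

# Hypothetical addresses: A and B share a LAN, C sits behind a router.
host_a = "172.16.1.10/24"
host_b = "172.16.1.20/24"
host_c = "172.16.2.10/24"

print(same_subnet(host_a, host_b))  # A and B: no router between them
print(same_subnet(host_a, host_c))  # A and C: separated by at least one router
```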

The idea that hosts on the same link must be in the same subnet is much like the postal/zip code concept. All mailing addresses in the same town use the same postal code.

Addresses in another town, whether relatively nearby or on the other side of the country, have a different postal code. The postal code gives the postal service a better ability to automatically sort the mail to deliver it to the right location. For the same general reasons, hosts on the same LAN are in the same subnet, and hosts in different LANs are in different subnets.

Note that the point-to-point WAN link in the figure also needs a subnet. Figure 3 shows Router R1 connected to the LAN subnet on the left and to a WAN subnet on the right. Router R2 connects to that same WAN subnet. To do so, both R1 and R2 will have IP addresses on their WAN interfaces, and the addresses will be in the same subnet. (An Ethernet over MPLS [EoMPLS] WAN link has the same IP addressing needs, with each of the two routers having an IP address in the same subnet.)

The Ethernet LANs in Figure 3 also show a slightly different style of drawing, using simple lines with no Ethernet switch. Drawings of Ethernet LANs when the details of the LAN switches do not matter simply show each device connected to the same line, as shown in Figure 3. (This kind of drawing mimics the original Ethernet cabling before switches and hubs existed.)

Finally, because the routers’ main job is to forward packets from one subnet to another, routers typically connect to multiple subnets. For example, in this case, Router R1 connects to one LAN subnet on the left and one WAN subnet on the right. To do so, R1 will be configured with two different IP addresses, one per interface. These addresses will be in different subnets, because the interfaces connect the router to different subnets.

Determining the Number of Subnets

To determine the number of subnets required, the engineer must think about the internetwork as documented and count the locations that need a subnet. To do so, the engineer requires access to network diagrams, VLAN configuration details, and details about WAN links. For the types of links discussed in this course, you should plan for one subnet for every:

- VLAN

- Point-to-point serial link

- Ethernet emulation WAN link (EoMPLS)

NOTE: WAN technologies like MPLS allow subnetting options other than one subnet per pair of routers on the WAN, but this course only uses WAN technologies that have one subnet for each point-to-point WAN connection between two routers.

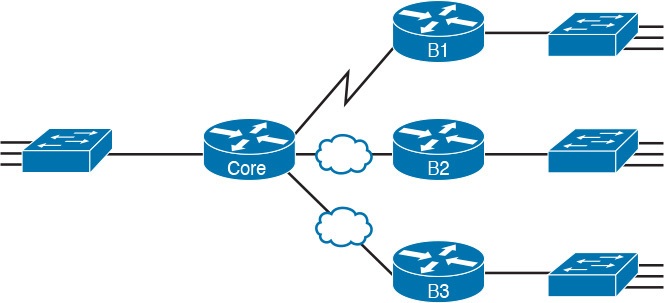

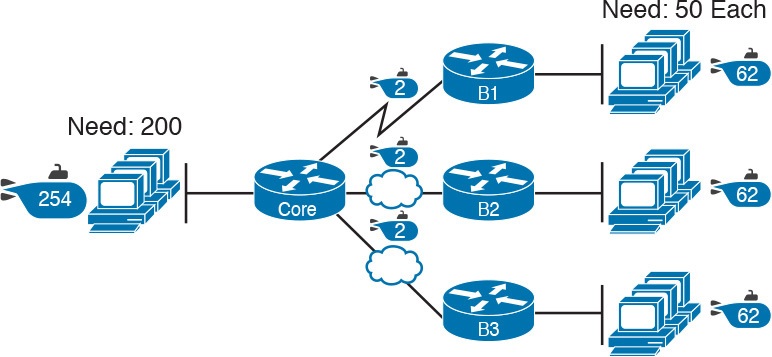

For example, imagine that the network planner has only Figure 4 on which to base the subnet design.

Figure 4: Four-Site Internetwork with Small Central Site

The number of subnets required cannot be fully predicted with only this figure. Certainly, three subnets will be needed for the WAN links, one per link. However, each LAN switch can be configured with a single VLAN, or with multiple VLANs. You can be certain that you need at least one subnet for the LAN at each site, but you might need more.

Next, consider the more detailed version of the same figure shown in Figure 5. In this case, the figure shows VLAN counts in addition to the same Layer 3 topology (the routers and the links connected to the routers). It also shows that the central site has many more switches, but the key fact on the left, regardless of how many switches exist, is that the central site has a total of 12 VLANs. Similarly, the figure lists each branch as having two VLANs. Along with the same three WAN subnets, this internetwork requires 21 subnets.

Figure 5: Four-Site Internetwork with Larger Central Site
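The count of 21 follows directly from the one-subnet-per-VLAN and one-subnet-per-WAN-link rule. A minimal sketch of that arithmetic, using the counts described for Figure 5 (the variable and function names are illustrative only):

```python
# Counts as described for Figure 5.
central_site_vlans = 12
branch_vlans = [2, 2, 2]   # three branches, two VLANs each
wan_links = 3              # one subnet per point-to-point WAN link

def subnets_required(central, branches, wan):
    """One subnet per VLAN plus one subnet per WAN link."""
    return central + sum(branches) + wan

total_subnets = subnets_required(central_site_vlans, branch_vlans, wan_links)
print(total_subnets)
```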

Finally, in a real job, you would consider the needs today as well as how much growth you expect in the internetwork over time. Any subnetting plan should include a reasonable estimate of the number of subnets you need to meet future needs.

Determining the Number of Hosts per Subnet

Determining the number of hosts per subnet requires knowing a few simple concepts and then doing a lot of research and questioning. Every device that connects to a subnet needs an IP address. For a totally new network, you can look at business plans—numbers of people at the site, devices on order, and so on—to get some idea of the possible devices. When expanding an existing network to add new sites, you can use existing sites as a point of comparison, and then find out which sites will get bigger or smaller. And don’t forget to count the router interface IP address in each subnet and the switch IP address used to remotely manage the switch.

Instead of gathering data for each and every site, planners often just use a few typical sites for planning purposes. For example, maybe you have some large sales offices and some small sales offices. You might dig in and learn a lot about only one large sales office and only one small sales office. Add that analysis to the fact that point-to-point links need a subnet with just two addresses, plus any analysis of more one-of-a-kind subnets, and you have enough information to plan the addressing and subnetting design.

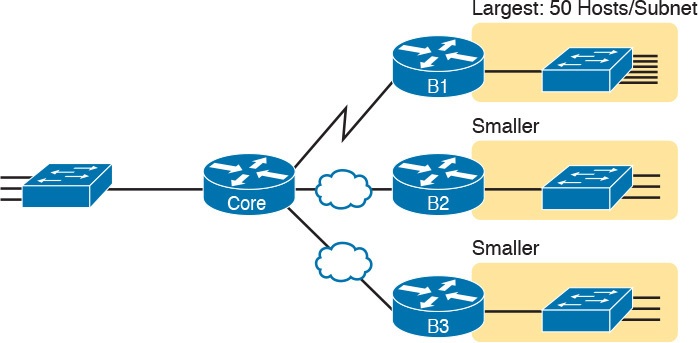

For example, in Figure 6, the engineer has built a diagram that shows the number of hosts per LAN subnet in the largest branch, B1. For the two other branches, the engineer did not bother to dig to find out the number of required hosts. As long as the number of required IP addresses at sites B2 and B3 stays below the estimate of 50, based on larger site B1, the engineer can plan for 50 hosts in each branch LAN subnet and have plenty of addresses per subnet.

Figure 6: Large Branch B1 with 50 Hosts/Subnet

One Size Subnet Fits All—Or Not

The final choice in the initial planning step is to decide whether you will use a simpler design by using a one-size-subnet-fits-all philosophy. A subnet’s size, or length, is simply the number of usable IP addresses in the subnet. A subnetting design can either use one size subnet, or varied sizes of subnets, with pros and cons for each choice.

Defining the Size of a Subnet

Before you finish this course, you will learn all the details of how to determine the size of the subnet. For now, you just need to know a few specific facts about the size of subnets.

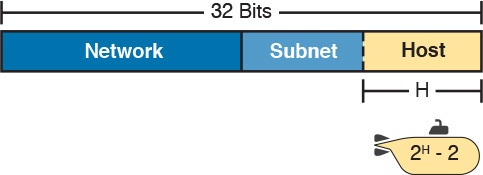

The engineer assigns each subnet a subnet mask, and that mask, among other things, defines the size of that subnet. The mask sets aside a number of host bits whose purpose is to number different host IP addresses in that subnet. Because you can number $2^x$ things with $x$ bits, if the mask defines $H$ host bits, the subnet contains $2^H$ unique numeric values.

However, the subnet’s size is not $2^H$. It’s $2^H - 2$, because two numbers in each subnet are reserved for other purposes. Each subnet reserves the numerically lowest value for the subnet number and the numerically highest value as the subnet broadcast address. As a result, the number of usable IP addresses per subnet is $2^H - 2$.
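The size formula can be cross-checked against Python's standard `ipaddress` module. This is a small sketch; the subnet 172.16.1.0 with 8 host bits (written `/24` in prefix notation) is used only as a sample, and `usable_hosts` is a helper name made up for the example.

```python
import ipaddress

def usable_hosts(host_bits):
    """Usable addresses in a subnet with the given number of host bits."""
    return 2 ** host_bits - 2

net = ipaddress.ip_network("172.16.1.0/24")   # 32 - 24 = 8 host bits

subnet_number = net.network_address           # numerically lowest value
broadcast = net.broadcast_address             # numerically highest value
counted = len(list(net.hosts()))              # everything in between

print(subnet_number, broadcast, counted, usable_hosts(8))
```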

NOTE: The terms subnet number, subnet ID, and subnet address all refer to the number that represents or identifies a subnet.

Figure 7 shows the general concept behind the three-part structure of an IP address, focusing on the host part and the resulting subnet size.

Figure 7: Subnet Size Concepts

One-Size Subnet Fits All

To choose to use a single-size subnet in an enterprise network, you must use the same mask for all subnets, because the mask defines the size of the subnet. But which mask?

One requirement to consider when choosing that one mask is this: That one mask must provide enough host IP addresses to support the largest subnet. To do so, the number of host bits ($H$) defined by the mask must be large enough so that $2^H - 2$ is larger than (or equal to) the number of host IP addresses required in the largest subnet.

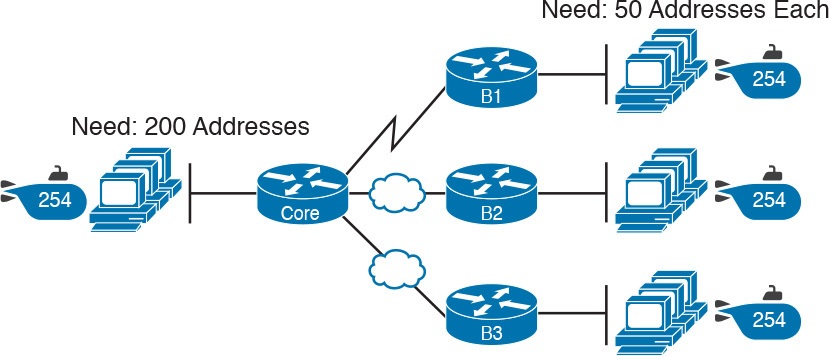

For example, consider Figure 8. It shows the required number of hosts per LAN subnet. (The figure ignores the subnets on the WAN links, which require only two IP addresses each.) The branch LAN subnets require only 50 host addresses, but the main site LAN subnet requires 200 host addresses. To accommodate the largest subnet, you need at least 8 host bits. Seven host bits would not be enough, because $2^7 - 2 = 126$. Eight host bits would be enough, because $2^8 - 2 = 254$, which is more than enough to support 200 hosts in a subnet.

Figure 8: Network Using One Subnet Size
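The search for the smallest workable number of host bits can be written as a short loop. This is a sketch of the reasoning in the example above; `min_host_bits` is a helper name invented here.

```python
def min_host_bits(required_hosts):
    """Smallest H such that 2**H - 2 >= required_hosts."""
    h = 1
    while 2 ** h - 2 < required_hosts:
        h += 1
    return h

largest_subnet = 200          # main site LAN in Figure 8
host_bits = min_host_bits(largest_subnet)
print(host_bits, 2 ** host_bits - 2)
```

With a single mask, every subnet inherits the host-bit count needed by the largest subnet, whatever the smaller subnets actually require.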

What’s the big advantage when using a single-size subnet? Operational simplicity. In other words, keeping it simple. Everyone on the IT staff who has to work with networking can get used to working with one mask—and one mask only. They will be able to answer all subnetting questions more easily, because everyone gets used to doing subnetting math with that one mask.

The big disadvantage for using a single-size subnet is that it wastes IP addresses. For example, in Figure 8, all the branch LAN subnets support 254 addresses, while the largest branch subnet needs only 50 addresses. The WAN subnets only need two IP addresses, but each supports 254 addresses, again wasting more IP addresses.

The wasted IP addresses do not actually cause a problem in most cases, however. Most organizations use private IP networks in their enterprise internetworks, and a single Class A or Class B private network can supply plenty of IP addresses, even with the waste.

Multiple Subnet Sizes (Variable-Length Subnet Masks)

To create multiple sizes of subnets in one Class A, B, or C network, the engineer must create some subnets using one mask, some with another, and so on. Different masks mean different numbers of host bits, and a different number of hosts in some subnets based on the $2^H - 2$ formula.

For example, consider the requirements listed earlier in Figure 8. It showed one LAN subnet on the left that needs 200 host addresses, three branch subnets that need 50 addresses, and three WAN links that need two addresses. To meet those needs, but waste fewer IP addresses, three subnet masks could be used, creating subnets of three different sizes, as shown in Figure 9.

Figure 9: Three Masks, Three Subnet Sizes

The smaller subnets now waste fewer IP addresses compared to the design shown earlier in Figure 8. The subnets on the right that need 50 IP addresses have subnets with 6 host bits, for $2^6 - 2 = 62$ available addresses per subnet. The WAN links use masks with 2 host bits, for $2^2 - 2 = 2$ available addresses per subnet.

However, some are still wasted, because you cannot set the size of the subnet as some arbitrary size. All subnets will be a size based on the $2^H - 2$ formula, with $H$ being the number of host bits defined by the mask for each subnet.
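To make the trade-off concrete, the sketch below compares unused addresses per subnet under the single-mask design and the three-mask design. The host-bit counts for the branch LANs (6) and WAN links (2) come from the text; the 8 host bits for the main LAN are carried over from the Figure 8 discussion and are an assumption about Figure 9. Function and variable names are illustrative.

```python
def usable_hosts(host_bits):
    return 2 ** host_bits - 2

# name -> (addresses needed, host bits in the three-mask design)
subnets = {
    "main LAN": (200, 8),     # assumption: same 8 host bits as in Figure 8
    "branch LAN": (50, 6),
    "WAN link": (2, 2),
}

SINGLE_MASK_HOST_BITS = 8     # the one-size design from Figure 8

def unused_addresses(subnets, fixed_host_bits=None):
    """Unused usable addresses per subnet; fixed_host_bits forces one mask."""
    result = {}
    for name, (needed, host_bits) in subnets.items():
        bits = host_bits if fixed_host_bits is None else fixed_host_bits
        result[name] = usable_hosts(bits) - needed
    return result

one_size = unused_addresses(subnets, SINGLE_MASK_HOST_BITS)
varied = unused_addresses(subnets)
print(one_size)
print(varied)
```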

One-Size Subnet Fits All (Mostly)

For the most part, this course explains subnetting using designs that use a single mask, creating a single subnet size for all subnets. Why? First, it makes the process of learning subnetting easier. Second, some types of analysis that you can do about a network—specifically, calculating the number of subnets in the classful network—only make sense when a single mask is used.

However, you still need to be ready to work with variable-length subnet masks (VLSM), which is the practice of using different masks for different subnets in the same classful IP network. Until VLSM is covered in detail, all the examples and discussion purposefully avoid it to keep the discussion simpler, for the sake of learning to walk before you run.

Make Design Choices

Now that you know how to analyze the IP addressing and subnetting needs, the next major step examines how to apply the rules of IP addressing and subnetting to those needs and make some choices. In other words, now that you know how many subnets you need and how many host addresses you need in the largest subnet, how do you create a useful subnetting design that meets those requirements? The short answer is that you need to do the three tasks shown on the right side of Figure 10.

Figure 10: Input to the Design Phase, and Design Questions to Answer

Choose a Classful Network

In the original design for what we know of today as the Internet, companies used registered public classful IP networks when implementing TCP/IP inside the company. By the mid-1990s, an alternative became more popular: private IP networks. This section discusses the background behind these two choices, because it impacts the choice of what IP network a company will then subnet and implement in its enterprise internetwork.

Public IP Networks

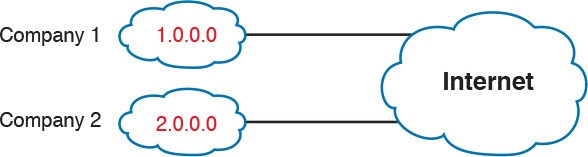

The original design of the Internet required that any company that connected to the Internet had to use a registered public IP network. To do so, the company would complete some paperwork, describing the enterprise’s internetwork and the number of hosts existing, plus plans for growth. After submitting the paperwork, the company would receive an assignment of either a Class A, B, or C network.

Public IP networks, and the administrative processes surrounding them, ensure that all the companies that connect to the Internet all use unique IP addresses. In particular, after a public IP network is assigned to a company, only that company should use the addresses in that network. That guarantee of uniqueness means that Internet routing can work well, because there are no duplicate public IP addresses.

For example, consider the example shown in Figure 11. Company 1 has been assigned public Class A network 1.0.0.0, and company 2 has been assigned public Class A network 2.0.0.0. Per the original intent for public addressing in the Internet, after these public network assignments have been made, no other companies can use addresses in Class A networks 1.0.0.0 or 2.0.0.0.

Figure 11: Two Companies with Unique Public IP Networks

This original address assignment process ensured unique IP addresses across the entire planet. The idea is much like the fact that your telephone number should be unique in the universe, your postal mailing address should also be unique, and your email address should also be unique. If someone calls you, your phone rings, but no one else’s phone rings. Similarly, if company 1 is assigned Class A network 1.0.0.0, and it assigns address 1.1.1.1 to a particular PC, that address should be unique in the universe. A packet sent through the Internet to destination 1.1.1.1 should only arrive at this one PC inside company 1, instead of being delivered to some other host.

Growth Exhausts the Public IP Address Space

By the early 1990s, the world was running out of public IP networks that could be assigned. During most of the 1990s, the number of hosts newly connected to the Internet was growing at a double-digit pace, per month. Companies kept following the rules, asking for public IP networks, and it was clear that the current address-assignment scheme could not continue without some changes. Simply put, the number of Class A, B, and C networks supported by the 32-bit address in IP version 4 (IPv4) was not enough to support one public classful network per organization, while also providing enough IP addresses in each company.

NOTE: The universe has run out of public IPv4 addresses in a couple of significant ways. IANA, which assigns public IPv4 address blocks to the five Regional Internet Registries (RIR) around the globe, assigned the last of the IPv4 address space in early 2011. By 2015, ARIN, the RIR for North America, exhausted its supply of IPv4 addresses, so companies must return unused public IPv4 addresses to ARIN before ARIN has more to assign to new companies. Try an online search for “ARIN depletion” to see pages about the current status of available IPv4 address space for just one RIR example.

The Internet community worked hard during the 1990s to solve this problem, coming up with several solutions, including the following:

- A new version of IP (IPv6), with much larger addresses (128 bit)

- Assigning a subset of a public IP network to each company, instead of an entire public IP network, to reduce waste

- Network Address Translation (NAT), which allows the use of private IP networks

These three solutions matter to real networks today. However, to stay focused on the topic of subnet design, this lesson focuses on the third option, and in particular, the private IP networks that can be used by an enterprise when also using NAT.

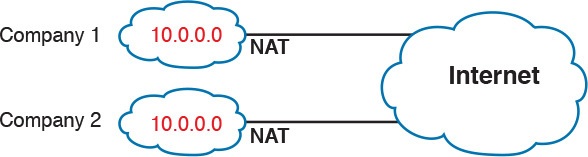

Focusing on the third item in the bullet list, NAT allows multiple companies to use the exact same private IP network, using the same IP addresses as other companies while still connecting to the Internet. For example, Figure 12 shows the same two companies connecting to the Internet as in Figure 11, but now with both using the same private Class A network 10.0.0.0.

Figure 12: Reusing the Same Private Network 10.0.0.0 with NAT

Both companies use the same classful IP network (10.0.0.0). Both companies can implement their subnet design internal to their respective enterprise internetworks, without discussing their plans. The two companies can even use the exact same IP addresses inside network 10.0.0.0. And amazingly, at the same time, both companies can even communicate with each other through the Internet.

The technology called Network Address Translation makes it possible for companies to reuse the same IP networks, as shown in Figure 12. NAT does this by translating the IP addresses inside the packets as they go from the enterprise to the Internet, using a small number of public IP addresses to support tens of thousands of private IP addresses. For now, accept that most companies use NAT, and therefore, they can use private IP networks for their internetworks.

Private IP Networks

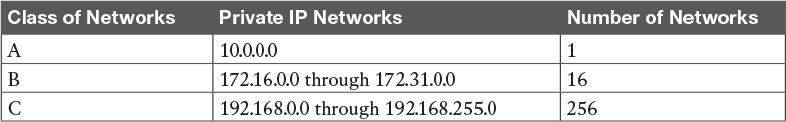

Request For Comments (RFC) 1918 defines the set of private IP networks, as listed in Table 1. By definition, these private IP networks:

- Will never be assigned to an organization as a public IP network

- Can be used by organizations that will use NAT when sending packets into the Internet

- Can also be used by organizations that never need to send packets into the Internet

So, when using NAT—and almost every organization that connects to the Internet uses NAT—the company can simply pick one or more of the private IP networks from the list of reserved private IP network numbers. RFC 1918 defines the list, which is summarized in Table 1.

Table 1: RFC 1918 Private Address Space
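As a working reference, the sketch below encodes the three private address blocks and tests whether an address falls inside one of them. The block boundaries are taken from RFC 1918 itself rather than from this lesson's table, and the prefix-length notation and the helper name `is_rfc1918` are assumptions of the example.

```python
import ipaddress

# Private address blocks as defined in RFC 1918.
RFC1918_BLOCKS = [
    ipaddress.ip_network("10.0.0.0/8"),       # Class A network 10.0.0.0
    ipaddress.ip_network("172.16.0.0/12"),    # Class B networks 172.16.0.0 through 172.31.0.0
    ipaddress.ip_network("192.168.0.0/16"),   # Class C networks 192.168.0.0 through 192.168.255.0
]

def is_rfc1918(address):
    """Return True when the address lies in one of the private blocks."""
    ip = ipaddress.ip_address(address)
    return any(ip in block for block in RFC1918_BLOCKS)

for sample in ["10.1.1.1", "172.16.1.1", "192.168.1.1", "1.1.1.1"]:
    print(sample, is_rfc1918(sample))
```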

NOTE: According to an informal survey I ran on my blog a few years back, about half of the respondents said that their networks use private Class A network 10.0.0.0, as opposed to other private networks or public networks.

Choosing an IP Network During the Design Phase

Today, some organizations use private IP networks along with NAT, and some use public IP networks. Most new enterprise internetworks use private IP addresses throughout the network, along with NAT, as part of the connection to the Internet. Those organizations that already have registered public IP networks—often obtained before the addresses started running short in the early 1990s—can continue to use those public addresses throughout their enterprise networks.

After the choice to use a private IP network has been made, just pick one that has enough IP addresses. You can have a small internetwork and still choose to use private Class A network 10.0.0.0. It might seem wasteful to choose a Class A network that has over 16 million IP addresses, especially if you only need a few hundred. However, there’s no penalty or problem with using a private network that is too large for your current or future needs.

For the purposes of this course, most examples use private IP network numbers. For the design step to choose a network number, just choose a private Class A, B, or C network from the list of RFC 1918 private networks.

Regardless, from a math and concept perspective, the methods to subnet a public IP network versus a private IP network are the same.

Choose the Mask

If a design engineer followed the topics in this lesson so far, in order, he would know the following:

- The number of subnets required

- The number of hosts/subnet required

- That a choice was made to use only one mask for all subnets, so that all subnets are the same size (same number of hosts/subnet)

- The classful IP network number that will be subnetted

This section completes the design process, at least the parts described in this lesson, by discussing how to choose that one mask to use for all subnets. First, this section examines default masks, used when a network is not subnetted, as a point of comparison. Next, the concept of borrowing host bits to create subnet bits is explored. Finally, this section ends with an example of how to create a subnet mask based on the analysis of the requirements.

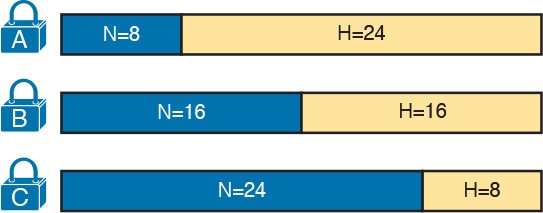

Classful IP Networks Before Subnetting

Before an engineer subnets a classful network, the network is a single group of addresses. In other words, the engineer has not yet subdivided the network into many smaller subsets called subnets.

When thinking about an unsubnetted classful network, the addresses in a network have only two parts: the network part and host part. Comparing any two addresses in the classful network:

- The addresses have the same value in the network part.

- The addresses have different values in the host part.

The actual sizes of the network and host part of the addresses in a network can be easily predicted, as shown in Figure 13.

In Figure 13, N and H represent the number of network and host bits, respectively. Class rules define the number of network octets (1, 2, or 3) for Classes A, B, and C, respectively; the figure shows these values as a number of bits. The number of host octets is 3, 2, or 1, respectively.

Continuing the analysis of a classful network before subnetting, the number of addresses in one classful IP network can be calculated with the same $2^H - 2$ formula previously discussed. In particular, the size of an unsubnetted Class A, B, or C network is as follows:

- Class A: $2^{24} - 2 = 16{,}777{,}214$

- Class B: $2^{16} - 2 = 65{,}534$

- Class C: $2^8 - 2 = 254$
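The class rules and the resulting network sizes can be tied together in a few lines. This sketch assumes the standard first-octet ranges for Classes A, B, and C (1 to 126, 128 to 191, and 192 to 223), which are not listed in this section; `classful_parts` is a name made up for the example.

```python
def classful_parts(address):
    """Return (class, network bits N, host bits H) for an unsubnetted network."""
    first_octet = int(address.split(".")[0])
    # Assumption: standard classful first-octet ranges.
    if 1 <= first_octet <= 126:
        return ("A", 8, 24)
    if 128 <= first_octet <= 191:
        return ("B", 16, 16)
    if 192 <= first_octet <= 223:
        return ("C", 24, 8)
    raise ValueError(f"{address} is not in a Class A, B, or C network")

for network in ["10.0.0.0", "172.16.0.0", "192.168.1.0"]:
    cls, n, h = classful_parts(network)
    # N and H always total 32 bits before subnetting.
    print(network, cls, n, h, 2 ** h - 2)
```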

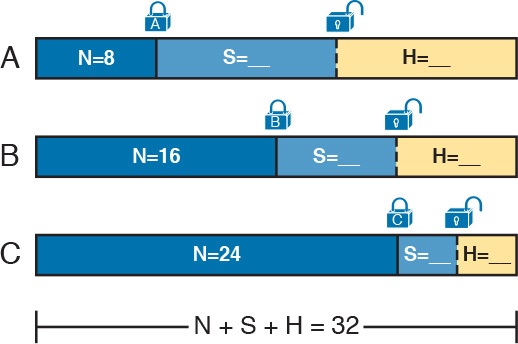

Borrowing Host Bits to Create Subnet Bits

To subnet a network, the designer thinks about the network and host parts, as shown in Figure 13, and then the engineer adds a third part in the middle: the subnet part. However, the designer cannot change the size of the network part or the size of the entire address (32 bits). To create a subnet part of the address structure, the engineer borrows bits from the host part. Figure 14 shows the general idea.

Figure 14: Concept of Borrowing Host Bits

Figure 14 shows a rectangle that represents the subnet mask. N, representing the number of network bits, remains locked at 8, 16, or 24, depending on the class. Conceptually, the designer moves a (dashed) dividing line into the host field, with subnet bits (S) between the network and host parts, and the remaining host bits (H) on the right. The three parts must add up to 32, because IPv4 addresses consist of 32 bits.

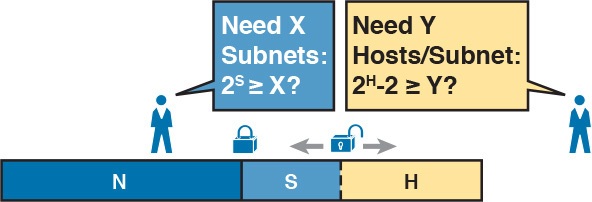

Choosing Enough Subnet and Host Bits

The design process requires a choice of where to place the dashed line shown in Figure 14. But what is the right choice? How many subnet and host bits should the designer choose? The answers hinge on the requirements gathered in the early stages of the planning process:

- Number of subnets required

- Number of hosts/subnet

The bits in the subnet part create a way to uniquely number the different subnets that the design engineer wants to create. With 1 subnet bit, you can number $2^1$ or 2 subnets. With 2 bits, $2^2$ or 4 subnets; with 3 bits, $2^3$ or 8 subnets; and so on. The number of subnet bits must be large enough to uniquely number all the subnets, as determined during the planning process.

At the same time, the remaining number of host bits must also be large enough to number the host IP addresses in the largest subnet. Remember, in this lesson, we assume the use of a single mask for all subnets. This single mask must support both the required number of subnets and the required number of hosts in the largest subnet. Figure 15 shows the concept.

Figure 15: Borrowing Enough Subnet and Host Bits

Figure 15 shows the idea of the designer choosing a number of subnet (S) and host (H) bits and then checking the math. $2^S$ must be at least the number of required subnets, or the mask will not supply enough subnets in this IP network. Also, $2^H - 2$ must be at least the required number of hosts/subnet.

NOTE: The idea of calculating the number of subnets as $2^S$ applies only in cases where a single mask is used for all subnets of a single classful network, as is being assumed in this lesson.
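The check described above can be sketched in Python as two small helpers that find the smallest number of bits meeting each requirement. The function names are hypothetical, and the sketch assumes a single mask for all subnets, as this lesson does.

```python
def subnet_bits_needed(required_subnets: int) -> int:
    """Smallest S such that 2^S is at least the required number of subnets."""
    s = 0
    while 2 ** s < required_subnets:
        s += 1
    return s


def host_bits_needed(required_hosts: int) -> int:
    """Smallest H such that 2^H - 2 is at least the required hosts per subnet."""
    h = 1
    while 2 ** h - 2 < required_hosts:
        h += 1
    return h


print(subnet_bits_needed(4))   # 2 bits number exactly 4 subnets
print(host_bits_needed(30))    # 5 bits give 2^5 - 2 = 30 host addresses
```

Note that a requirement that exactly equals a power of 2 (for subnets) or a power of 2 minus 2 (for hosts) is still satisfied, which is why the comparison is "at least" rather than "more than".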

To effectively design masks, or to interpret masks that were chosen by someone else, you need a good working memory of the powers of 2. Refer to the Numeric Reference Tables as needed.

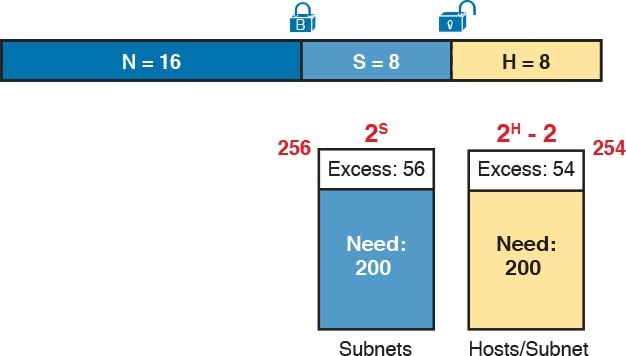

Example Design: 172.16.0.0, 200 Subnets, 200 Hosts

To help make sense of the theoretical discussion so far, consider an example that focuses on the design choice for the subnet mask. In this case, the planning and design choices so far tell us the following:

- Use a single mask for all subnets.

- Plan for 200 subnets.

- Plan for 200 host IP addresses per subnet.

- Use private Class B network 172.16.0.0.

To choose the mask, the designer asks this question:

How many subnet (S) bits do I need to number 200 subnets?

From Table 2, you can see that S = 7 is not large enough ($2^7 = 128$), but S = 8 is enough ($2^8 = 256$). So, you need at least 8 subnet bits.

Table 2: First Ten Subnets, Plus the Last Few, from 172.16.0.0, 255.255.255.0

Next, the designer asks a similar question, based on the number of hosts per subnet:

How many host (H) bits do I need to number 200 hosts per subnet?

The math is basically the same, but the formula subtracts 2 when counting the number of hosts/subnet. From Table 2, you can see that H = 7 is not large enough ($2^7 - 2 = 126$), but H = 8 is enough ($2^8 - 2 = 254$).

Only one possible mask meets all the requirements in this case. First, the number of network bits (N) must be 16, because the design uses a Class B network. The requirements tell us that the mask needs at least 8 subnet bits and at least 8 host bits. The mask has only 32 bits, and 16 + 8 + 8 = 32, so no bits are left over to shift in either direction. Figure 16 shows the resulting mask.
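To see why only one mask works, the following Python sketch tries every possible split of the 16 non-network bits of a Class B network and keeps the splits that satisfy both requirements. The function name `candidate_masks` is invented for this illustration.

```python
def candidate_masks(network_bits: int, required_subnets: int, required_hosts: int):
    """Return every (S, H, prefix_length) split of the non-network bits that
    supplies enough subnets (2^S) and enough hosts per subnet (2^H - 2)."""
    available = 32 - network_bits      # bits the designer can divide between S and H
    results = []
    for s in range(available + 1):
        h = available - s              # N + S + H must always total 32
        if 2 ** s >= required_subnets and 2 ** h - 2 >= required_hosts:
            results.append((s, h, network_bits + s))
    return results


# Class B network 172.16.0.0 (N = 16), 200 subnets, 200 hosts per subnet
print(candidate_masks(16, 200, 200))
```

With looser requirements (for example, 50 subnets and 200 hosts), the same function returns several candidates, and the designer must then choose among them.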

Figure 16: Example Mask Choice, N = 16, S = 8, H = 8

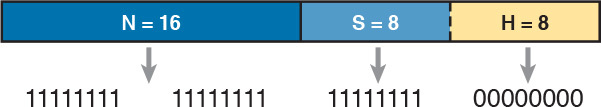

Masks and Mask Formats

Although engineers think about IP addresses in three parts when making design choices (network, subnet, and host), the subnet mask gives the engineer a way to communicate those design choices to all the devices in the subnet.

The subnet mask is a 32-bit binary number with a number of binary 1s on the left and with binary 0s on the right. By definition, the number of binary 0s equals the number of host bits; in fact, that is exactly how the mask communicates the idea of the size of the host part of the addresses in a subnet. The beginning bits in the mask equal binary 1, with those bit positions representing the combined network and subnet parts of the addresses in the subnet.

Because the network part always comes first, then the subnet part, and then the host part, the subnet mask, in binary form, cannot have interleaved 1s and 0s. Each subnet mask has one unbroken string of binary 1s on the left, with the rest of the bits as binary 0s.

After the engineer chooses the classful network and the number of subnet and host bits in a subnet, creating the binary subnet mask is easy. Just write down N 1s, S 1s, and then H 0s (assuming that N, S, and H represent the number of network, subnet, and host bits). Figure 17 shows the mask based on the previous example, which subnets a Class B network by creating 8 subnet bits, leaving 8 host bits.

Figure 17: Creating the Subnet Mask—Binary—Class B Network

In addition to the binary mask shown in Figure 17, masks can also be written in two other formats: the familiar dotted-decimal notation (DDN) seen in IP addresses and an even briefer prefix notation.
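The "write N 1s, S 1s, then H 0s" rule, along with the two other formats, can be sketched in Python as follows. The helper name `build_mask` is hypothetical and exists only for this example.

```python
def build_mask(n: int, s: int, h: int):
    """Build a subnet mask from the network (N), subnet (S), and host (H)
    bit counts, returning it in binary, dotted-decimal, and prefix formats."""
    if n + s + h != 32:
        raise ValueError("N + S + H must equal 32 for an IPv4 mask")
    bits = "1" * (n + s) + "0" * h                      # unbroken 1s, then 0s
    octets = [bits[i:i + 8] for i in range(0, 32, 8)]   # four groups of 8 bits
    binary = " ".join(octets)
    ddn = ".".join(str(int(octet, 2)) for octet in octets)
    prefix = f"/{n + s}"                                # count of binary 1s
    return binary, ddn, prefix


# The Class B example: N = 16, S = 8, H = 8
binary, ddn, prefix = build_mask(16, 8, 8)
print(binary)
print(ddn)
print(prefix)
```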

Build a List of All Subnets

This final task of the subnet design step determines the actual subnets that can be used, based on all the earlier choices. The earlier design work determined the Class A, B, or C network to use, and the (one) subnet mask to use that supplies enough subnets and enough host IP addresses per subnet. But what are those subnets? How do you identify or describe a subnet? This section answers these questions.

A subnet consists of a group of consecutive numbers. Most of these numbers can be used as IP addresses by hosts. However, each subnet reserves the first and last numbers in the group, and these two numbers cannot be used as IP addresses. In particular, each subnet contains the following:

Subnet number: Also called the subnet ID or subnet address, this number identifies the subnet. It is the numerically smallest number in the subnet. It cannot be used as an IP address by a host.

Subnet broadcast: Also called the subnet broadcast address or directed broadcast address, this is the last (numerically highest) number in the subnet. It also cannot be used as an IP address by a host.

IP addresses: All the numbers between the subnet ID and the subnet broadcast address can be used as a host IP address.
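As an illustration, these three facts can be derived for any one subnet with Python's standard `ipaddress` module. The function name `subnet_facts` and the sample subnet 172.16.1.0/24 are choices made for this sketch.

```python
import ipaddress


def subnet_facts(cidr: str) -> dict:
    """Return the subnet ID, the subnet broadcast address, and the first and
    last usable host addresses for a subnet given as address/prefix."""
    net = ipaddress.ip_network(cidr)
    return {
        "subnet_id": str(net.network_address),           # numerically lowest, reserved
        "broadcast": str(net.broadcast_address),         # numerically highest, reserved
        "first_host": str(net.network_address + 1),      # first usable address
        "last_host": str(net.broadcast_address - 1),     # last usable address
    }


facts = subnet_facts("172.16.1.0/24")
for name, value in facts.items():
    print(f"{name}: {value}")
```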

For example, consider the earlier case in which the design results were as follows:

- Network 172.16.0.0 (Class B)

- Mask 255.255.255.0 (for all subnets)

With some math, the facts about each subnet that exists in this Class B network can be calculated. In this case, Table 2 shows the first ten such subnets. It then skips many subnets and shows the last two (numerically largest) subnets.

After you have the network number and the mask, calculating the subnet IDs and other details for all subnets requires some math. In real life, most people use subnet calculators or subnet-planning tools.
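A small script can stand in for a subnet calculator here. The sketch below uses Python's standard `ipaddress` module to list every subnet of 172.16.0.0 when the mask 255.255.255.0 (/24) is used, printing the first ten and the last two. The variable names are illustrative only.

```python
import ipaddress

network = ipaddress.ip_network("172.16.0.0/16")        # the Class B network
all_subnets = list(network.subnets(new_prefix=24))     # every subnet using mask /24

print(f"Total subnets: {len(all_subnets)}")

# First ten subnets, then the last two (numerically largest)
for subnet in all_subnets[:10] + all_subnets[-2:]:
    first_host = subnet.network_address + 1
    last_host = subnet.broadcast_address - 1
    print(f"{subnet.network_address}  hosts {first_host} - {last_host}  "
          f"broadcast {subnet.broadcast_address}")
```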

Plan the Implementation

The next step, planning the implementation, is the last step before actually configuring the devices to create a subnet. The engineer first needs to choose where to use each subnet. For example, at a branch office in a particular city, which subnet from the subnet planning chart (Table 2) should be used for each VLAN at that site? Also, for any interfaces that require static IP addresses, which addresses should be used in each case? Finally, what range of IP addresses from inside each subnet should be configured in the DHCP server, to be dynamically leased to hosts for use as their IP address? Figure 18 summarizes the list of implementation planning tasks.

Figure 18: Facts Supplied to the Plan Implementation Step

Assigning Subnets to Different Locations

The job is simple: Look at your network diagram, identify each location that needs a subnet, and pick one from the table you made of all the possible subnets. Then, track it so that you know which ones you use where, using a spreadsheet or some other purpose-built subnet-planning tool. That’s it! Figure 19 shows a sample of a completed design using Table 2, which happens to match the initial design sample shown way back in Figure 1.

Figure 19: Example of Subnets Assigned to Different Locations

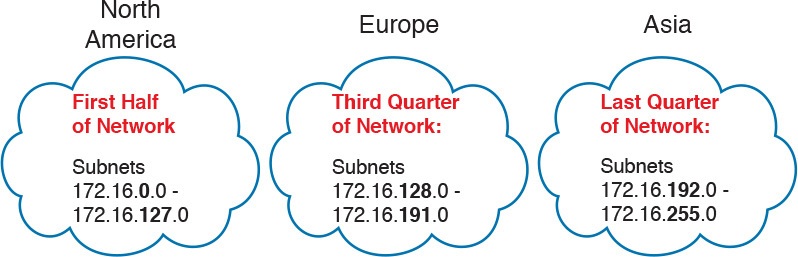

Although this design could have used any five subnets from Table 2, in real networks, engineers usually give more thought to some strategy for assigning subnets. For example, you might assign all LAN subnets lower numbers and WAN subnets higher numbers. Or you might slice off large ranges of subnets for different divisions of the company. Or you might follow that same strategy, but ignore organizational divisions in the company, paying more attention to geographies.

For example, for a U.S.-based company with a smaller presence in both Europe and Asia, you might plan to reserve ranges of subnets based on continent. This kind of choice is particularly useful when later trying to use a feature called route summarization.

Figure 20 shows the general benefit of grouping addresses by location in the network to make route summarization easier, using the same subnets from Table 2 again.

Figure 20: Reserving 50 Percent of Subnets for North America and 25 Percent Each for Europe and Asia

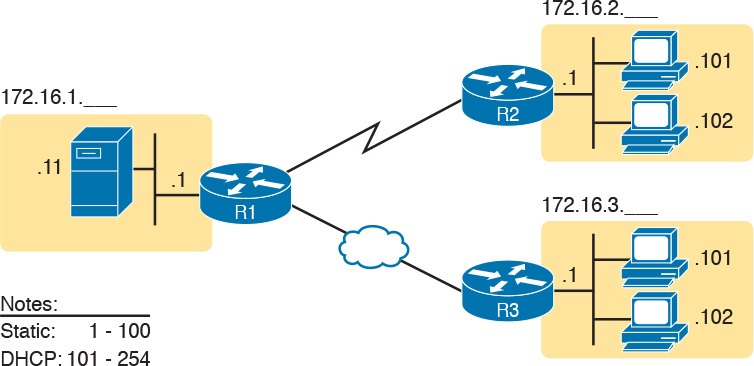
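The 50/25/25 split can be sketched with Python's standard `ipaddress` module. Which half or quarter goes to which continent is an assumption made for this example (North America in the lower half, then Europe, then Asia); the point is only that each region ends up as one contiguous block that a single summary route can describe.

```python
import ipaddress

network = ipaddress.ip_network("172.16.0.0/16")

# Assumed layout: split the network in half, then split the upper half again.
north_america, upper_half = network.subnets(prefixlen_diff=1)
europe, asia = upper_half.subnets(prefixlen_diff=1)

regions = {"North America": north_america, "Europe": europe, "Asia": asia}

for name, block in regions.items():
    count = len(list(block.subnets(new_prefix=24)))    # /24 subnets inside the block
    print(f"{name}: summary {block}, {count} subnets")


def region_of(cidr: str) -> str:
    """Return the region whose reserved block contains the given /24 subnet."""
    subnet = ipaddress.ip_network(cidr)
    for name, block in regions.items():
        if subnet.subnet_of(block):
            return name
    return "unassigned"


print(region_of("172.16.130.0/24"))
```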

Choose Static and Dynamic Ranges per Subnet

Devices receive their IP address and mask assignment in one of two ways: dynamically by using Dynamic Host Configuration Protocol (DHCP) or statically through configuration. For DHCP to work, the network engineer must tell the DHCP server the subnets for which it must assign IP addresses. In addition, that configuration limits the DHCP server to only a subset of the addresses in the subnet. For static addresses, you simply configure the device to tell it what IP address and mask to use.

To keep things as simple as possible, most shops use a strategy to separate the static IP addresses on one end of each subnet, and the DHCP-assigned dynamic addresses on the other. It does not really matter whether the static addresses sit on the low end of the range of addresses or the high end.

For example, imagine that the engineer decides that, for the LAN subnets in Figure 19, the DHCP pool comes from the high end of the range, namely, addresses that end in .101 through .254. (The address that ends in .255 is, of course, reserved.) The engineer also assigns static addresses from the lower end, with addresses ending in .1 through .100. Figure 21 shows the idea.

Figure 21: Static from the Low End and DHCP from the High End

Figure 21 shows all three routers with statically assigned IP addresses that end in .1. The only other static IP address in the figure is assigned to the server on the left, with address 172.16.1.11 (abbreviated simply as .11 in the figure).

On the right, each LAN has two PCs that use DHCP to dynamically lease their IP addresses. DHCP servers often begin by leasing the addresses at the bottom of the range of addresses, so in each LAN, the hosts have leased addresses that end in .101 and .102, which are at the low end of the range chosen by design.
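The static/dynamic split described above can be expressed as a short Python sketch using the standard `ipaddress` module. The function name `address_plan` and its `static_count` parameter are hypothetical; the default of 100 matches the .1 through .100 static range in the example.

```python
import ipaddress


def address_plan(cidr: str, static_count: int = 100):
    """Split a subnet's usable addresses into a static range (low end)
    and a DHCP pool (high end). Returns two (first, last) pairs."""
    hosts = list(ipaddress.ip_network(cidr).hosts())   # excludes subnet ID and broadcast
    static = hosts[:static_count]
    dhcp = hosts[static_count:]
    static_range = (str(static[0]), str(static[-1]))
    dhcp_range = (str(dhcp[0]), str(dhcp[-1]))
    return static_range, dhcp_range


static_range, dhcp_range = address_plan("172.16.1.0/24")
print("Static:", static_range)
print("DHCP:  ", dhcp_range)
```

The DHCP range produced here is what the engineer would then configure as the pool on the DHCP server for that subnet.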

Troubleshooting Ethernet LANs

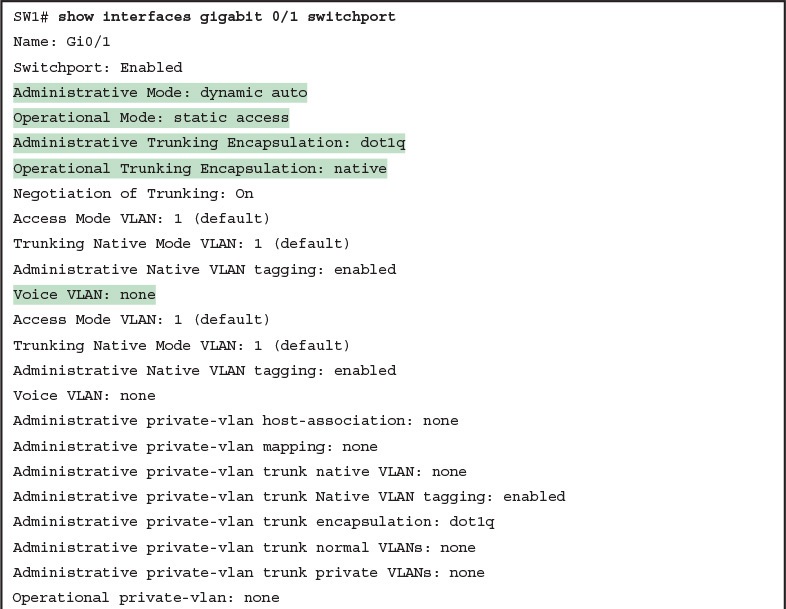

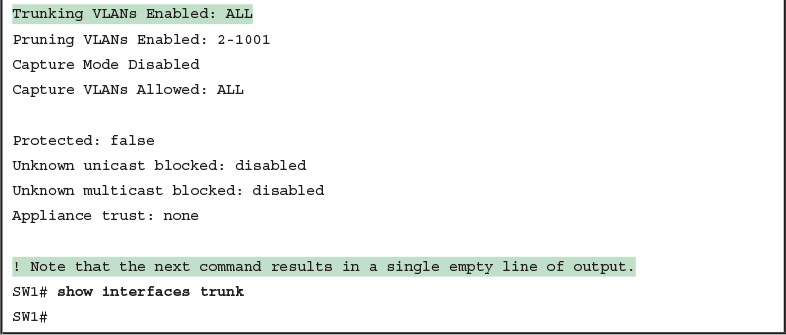

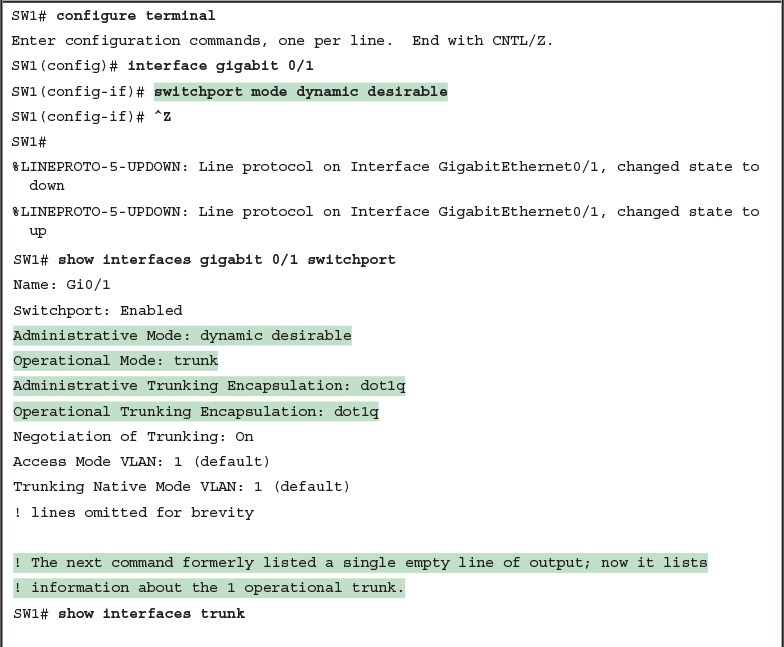

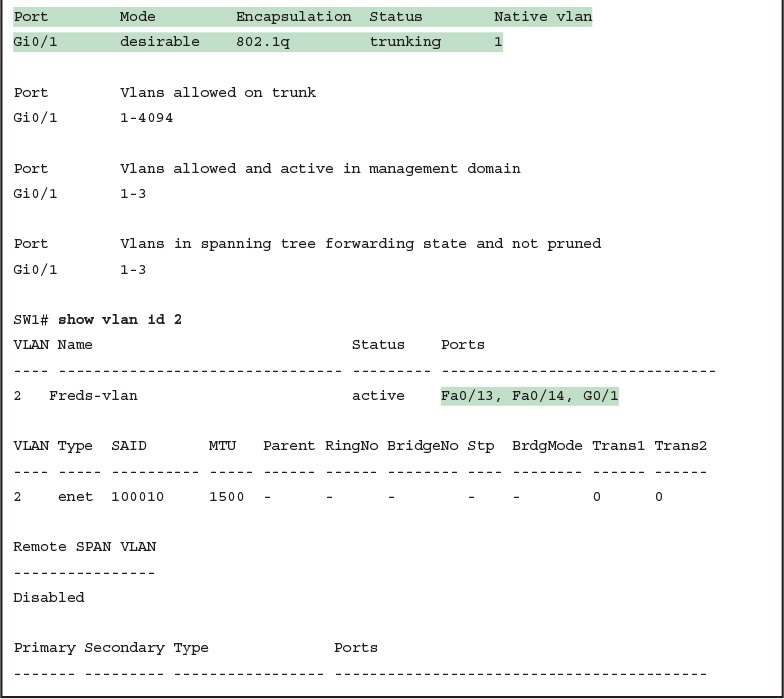

This lesson focuses on the processes of verification and troubleshooting.

Verification refers to the process of confirming whether a network is working as designed.

Troubleshooting refers to the follow-on process that occurs when the network is not working as designed, by trying to determine the real reason why the network is not working correctly, so that it can be fixed.

Sometimes, when people take their first Cisco exam, they are surprised at the number of verification and troubleshooting questions. Each of these questions requires you to apply networking knowledge to unique problems, rather than just being ready to answer questions about lists of facts that you’ve memorized. You need to have skills beyond simply remembering a lot of facts.

This lesson covers a wide range of topics:

- Analyzing switch interfaces and cabling

- Predicting where switches will forward frames

- Troubleshooting port security

- Analyzing VLANs and VLAN trunks

Perspectives on Applying Troubleshooting Methodologies

This first section of the lesson takes a brief diversion for one particular big idea: what troubleshooting processes could be used to resolve networking problems? Most CCNA Routing and Switching exam topics list the word “troubleshoot” along with some technology, mostly with some feature that you configure on a switch or router. One exam topic makes mention of the troubleshooting process. This first section examines the troubleshooting process as an end in itself.

The first important perspective on the troubleshooting process is this: you can troubleshoot using any process or method that you want. However, all good methods have the same characteristics that result in faster resolution of the problem, and a better chance of avoiding that same problem in the future.

The one exam topic that mentions troubleshooting methods uses some pretty common terminology found in troubleshooting methods both inside IT and in other industries as well.

The ideas make good common sense. From the exam topics:

- Step 1. Problem isolation and documentation: Problem isolation refers to the process of taking what you know about a possible issue, confirming that there is a problem, and determining which devices and cables could be part of the problem, and which ones are not part of the problem. This step also works best when the person troubleshooting the problem documents what they find, typically in a problem tracking system.

- Step 2. Resolve or escalate: Problem isolation should eventually uncover the root cause of the problem—that is, the cause which, if fixed, will resolve the problem. In short, resolving the problem means finding the root cause of the problem and fixing that problem. Of course, what do you do if you cannot find the root cause, or fix (resolve) that root cause once found? Escalate the problem. Most companies have a defined escalation process, with different levels of technical support and management support depending on whether the next step requires more technical expertise or management decision making.

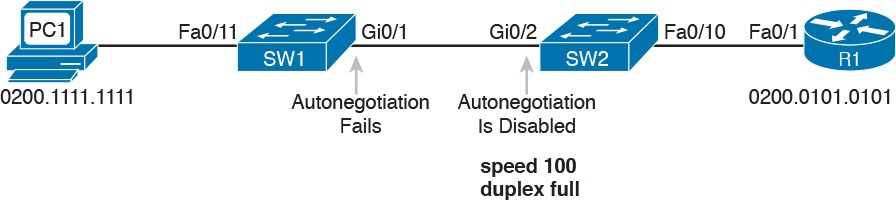

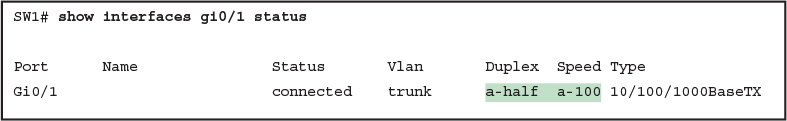

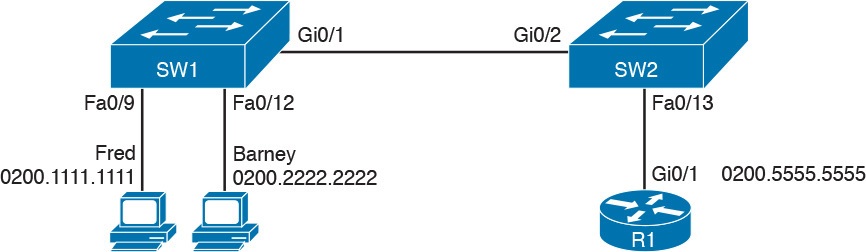

- Step 3. Verify or monitor: You hear of a problem, you isolate the problem, document it, determine a possible root cause, and you try to resolve it. Now you need to verify that it really worked. In some cases, that may mean that you just do a few show commands. In other cases, you may need to keep an eye on it over a period of time, especially when you do not know what caused the root problem in the first place.